What Is Time Complexity in Data Structures?

Why does one search operation take milliseconds while another takes seconds? Why do some programs slow down drastically as the amount of data increases?

The answer often lies in time complexity, which measures how the running time of an algorithm grows as the input size increases. Instead of focusing on actual execution time, it helps developers estimate performance based on the number of operations.

Understanding time complexity is essential in data structures because it helps in choosing efficient algorithms, writing optimized code, and building scalable applications. It is also one of the most commonly tested concepts in technical interviews, where candidates are expected to analyze and improve solutions.

In this article, you will learn what time complexity means, why it matters in DSA, how interviewers evaluate it, and how to calculate it using simple methods.

What Is Time Complexity?

Time complexity measures how the number of operations performed by an algorithm increases as the input size grows. Instead of measuring actual execution time in seconds, it focuses on how performance scales with larger inputs.

This helps developers compare algorithms and choose the most efficient solution without depending on hardware or programming language differences.

Time Complexity vs Actual Running Time

Many beginners confuse time complexity with actual running time. However, actual runtime can vary due to several external factors, while time complexity provides a standardized way to measure algorithm efficiency.

| Factor | Why runtime differs |
|---|---|
| Hardware | Faster processors execute instructions more quickly than slower systems |
| Compiler | Some compilers optimize code better, improving execution speed |
| Programming Language | Programs in compiled languages like C usually run faster than equivalent Python programs, which are interpreted |
| Input Size | Larger inputs take longer to process; time complexity describes how the running time grows as the input size increases |

This is why time complexity is considered a more reliable way to evaluate algorithm efficiency than measuring execution time directly.

Why Time Complexity Matters in Data Structures

Time complexity plays an important role in data structures because it helps developers understand how efficiently operations like searching, inserting, and deleting data can be performed.

Some key reasons why time complexity matters include:

1. Choosing the right data structure

Time complexity helps in selecting the most suitable data structure for a problem.

Example: Searching for an element in an unsorted array takes O(n) time. Looking up a key in a HashMap takes O(1) time on average, which makes the HashMap a better choice for frequent lookups.
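Here is a minimal Python sketch of the difference. It assumes Python's built-in `list` stands in for an array and `set` for a hash-based structure like a HashMap. The `user_ids` data is made up for illustration:

```python
# Hypothetical sample data for illustration.
user_ids = [1042, 2077, 3310, 4521, 5890]

# List membership: Python compares the target with each element in turn -> O(n).
found_in_list = 3310 in user_ids

# Set membership: the target's hash points straight to its bucket -> O(1) on average.
user_id_set = set(user_ids)
found_in_set = 3310 in user_id_set

print(found_in_list, found_in_set)
```

Building the set costs O(n) once. It pays off when the same collection is searched many times.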

2. Improving scalability

Inefficient algorithms may work well with small data but fail when the data grows.

Example: An O(n²) algorithm may work fine for 1,000 records but becomes extremely slow when processing millions of records.
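The gap comes from squaring the input size. The sketch below counts the steps for an algorithm that does one step per pair of records. It assumes a speed of 100 million simple steps per second, a round number chosen for illustration rather than a measurement:

```python
# Assumption for illustration only: roughly 100 million simple steps per second.
STEPS_PER_SECOND = 100_000_000


def quadratic_steps(n):
    """Steps taken by an O(n^2) algorithm that does one step per pair (i, j)."""
    return n * n


for n in (1_000, 1_000_000):
    steps = quadratic_steps(n)
    seconds = steps / STEPS_PER_SECOND
    print(f"n = {n:,}: {steps:,} steps, about {seconds:,.2f} s")
```

At that assumed rate, 1,000 records need about 0.01 seconds. 1,000,000 records need about 10,000 seconds, which is close to three hours.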

3. Optimizing operations

Understanding time complexity helps developers choose data structures that perform operations efficiently.

Example: Stacks and queues support insertion and deletion at their ends in O(1) time. Inserting into or deleting from the front or middle of an array takes O(n) time, because the remaining elements must be shifted.
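A short Python sketch of these costs follows. It uses a Python `list` as the stack and the array, and `collections.deque` as the queue, because popping from the front of a plain list would be O(n):

```python
from collections import deque

# Stack: push and pop work on the end of a Python list -> O(1) (amortized for push).
stack = []
stack.append(10)         # push
stack.append(20)         # push
top = stack.pop()        # pop removes the most recent item

# Queue: deque adds at the back and removes from the front in O(1).
queue = deque()
queue.append("a")        # enqueue
queue.append("b")        # enqueue
front = queue.popleft()  # dequeue removes the oldest item

# Array insertion at the front: every existing element shifts one slot right -> O(n).
arr = [1, 2, 3, 4]
arr.insert(0, 0)

print(top, front, arr)
```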

4. Interview evaluation criteria

Time complexity often determines whether a solution is considered optimal in technical interviews.

Interviewers usually expect candidates to:

- Analyze the time complexity of their solution

- Suggest improvements

- Compare multiple approaches

- Optimize inefficient code

This is why understanding time complexity is essential for both software development and interview preparation.

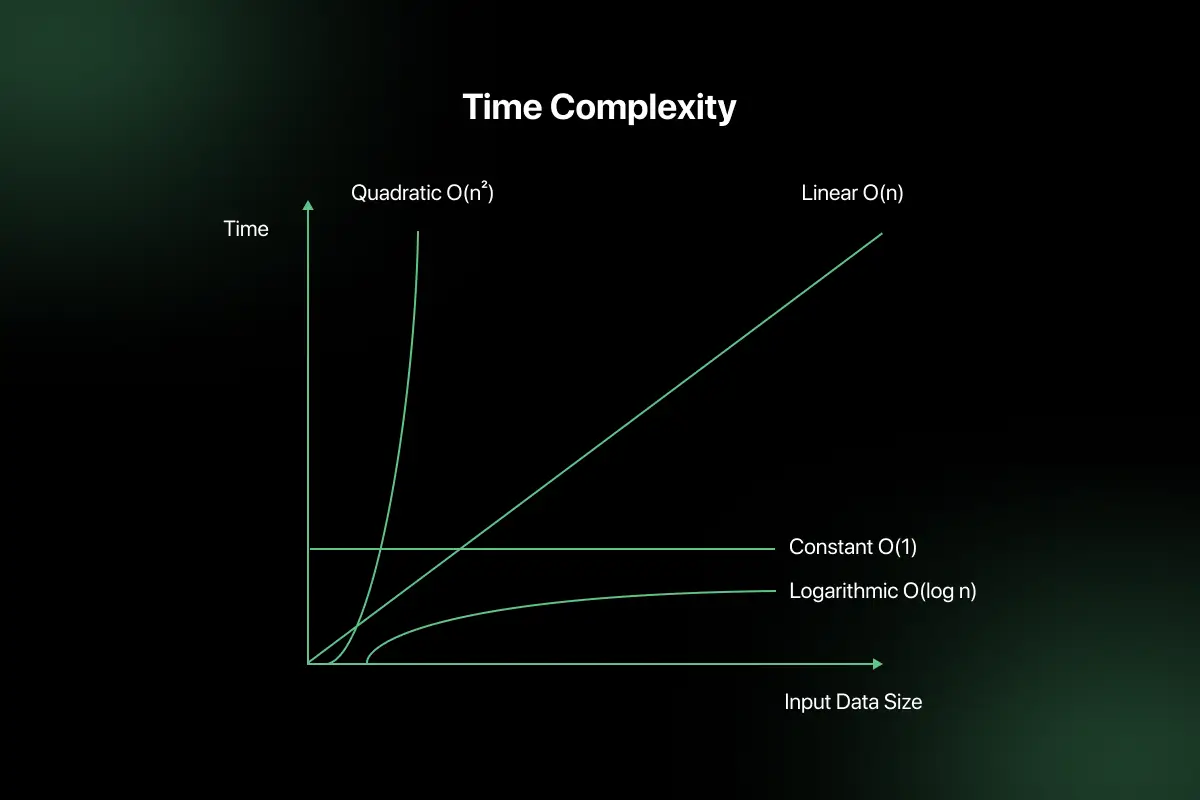

Common Time Complexities in Data Structures

Time complexity is commonly expressed using Big O notation to describe how the number of operations increases with input size. Understanding these common complexity types helps in evaluating the efficiency of algorithms and data structure operations.

1. Constant Time – O(1)

Constant time means the operation takes the same amount of time regardless of input size.

Examples:

- Array index access

- Stack push and pop operations

2. Logarithmic Time – O(log n)

Logarithmic time means the number of operations grows slowly, because the problem size is reduced by a constant factor (usually halved) at each step.

Examples:

- Binary search

- Operations in balanced trees like AVL trees

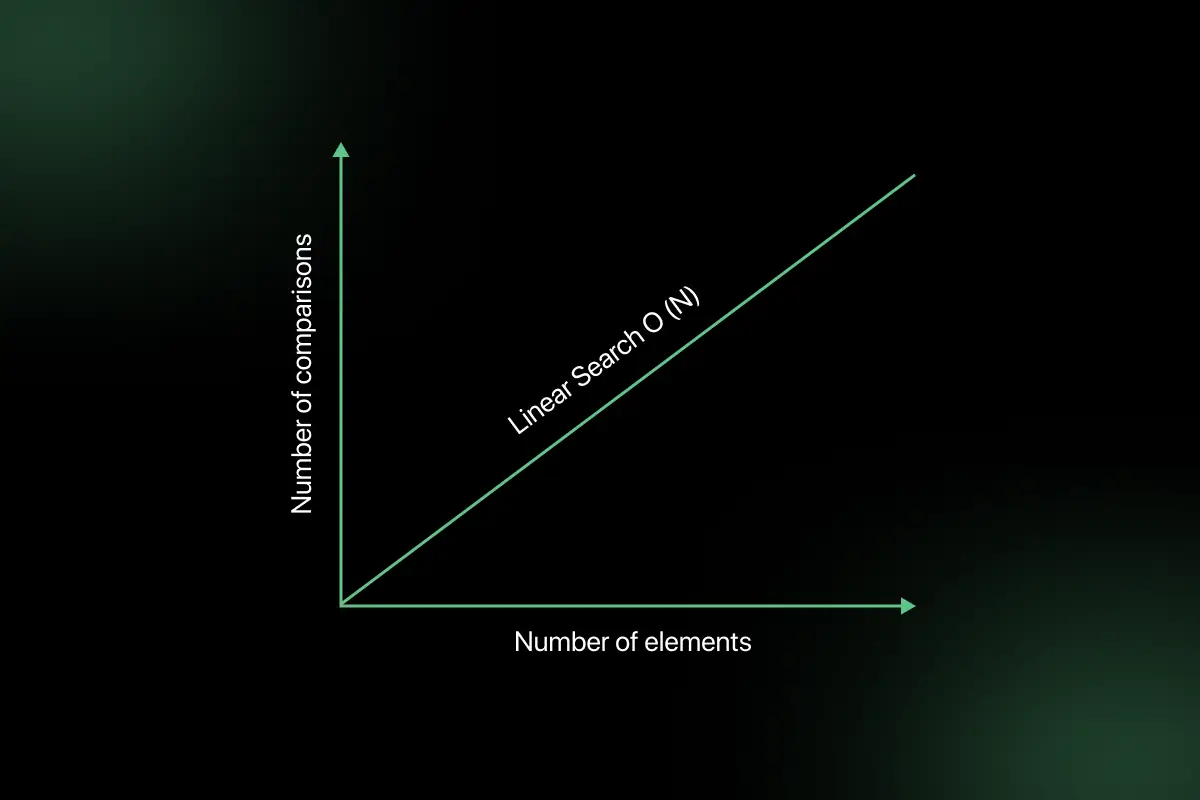
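Below is a minimal iterative binary search in Python that also counts loop iterations. The step counter is an illustrative addition, and the function assumes the input list is already sorted:

```python
def binary_search(arr, target):
    """Return (index, steps) for target in a sorted list, or (-1, steps) if absent.

    'steps' counts loop iterations; each one halves the remaining search range.
    """
    low, high = 0, len(arr) - 1
    steps = 0
    while low <= high:
        steps += 1
        mid = (low + high) // 2
        if arr[mid] == target:
            return mid, steps
        if arr[mid] < target:
            low = mid + 1    # target can only be in the right half
        else:
            high = mid - 1   # target can only be in the left half
    return -1, steps


data = list(range(1_000_000))   # one million sorted integers
print(binary_search(data, 999_999))
```

For one million sorted elements, the loop runs at most 20 times, since 2²⁰ (1,048,576) is just over a million.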

3. Linear Time – O(n)

Linear time means the number of operations increases directly with the input size.

Examples:

- Linear search

- Traversing an array or linked list
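Here is a minimal Python sketch of linked list traversal. It uses a hypothetical `Node` class defined only for this example. The loop visits each node exactly once, so n nodes take n iterations:

```python
class Node:
    """A singly linked list node (a minimal illustration, not a library type)."""

    def __init__(self, value, next_node=None):
        self.value = value
        self.next = next_node


def list_length(head):
    """Walk the list from head to tail, counting nodes: O(n) time."""
    count = 0
    current = head
    while current is not None:
        count += 1
        current = current.next
    return count


head = Node(3, Node(7, Node(1)))   # 3 -> 7 -> 1
print(list_length(head))
```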

4. Linearithmic Time – O(n log n)

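Linearithmic complexity occurs when an algorithm does a logarithmic amount of work for each of its n elements, or linear work across a logarithmic number of levels. Merge sort halves the input about log n times and does O(n) merging work at each level. Heap sort performs n heap operations that each cost O(log n).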

Examples:

- Merge sort

- Heap sort
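Below is a minimal top-down merge sort in Python, sketched to show where the n log n comes from. The list is halved about log₂ n times, and merging at each level touches all n elements once:

```python
def merge_sort(arr):
    """Return a new sorted list. The input is halved about log n times,
    and each level of recursion does O(n) merging work in total."""
    if len(arr) <= 1:
        return list(arr)
    mid = len(arr) // 2
    left = merge_sort(arr[:mid])
    right = merge_sort(arr[mid:])

    # Merge the two sorted halves in a single linear pass.
    merged = []
    i = j = 0
    while i < len(left) and j < len(right):
        if left[i] <= right[j]:
            merged.append(left[i])
            i += 1
        else:
            merged.append(right[j])
            j += 1
    merged.extend(left[i:])   # at most one of these two still has elements
    merged.extend(right[j:])
    return merged


print(merge_sort([38, 27, 43, 3, 9, 82, 10]))
```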

5. Quadratic Time – O(n²)

Quadratic time occurs when operations grow proportionally to the square of the input size, usually due to nested loops.

Examples:

- Nested loop comparisons

- Bubble sort
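Here is a standard bubble sort in Python, shown as one common variant. It exits early when a pass makes no swaps. That makes the best case (already sorted input) O(n), while the worst case stays O(n²):

```python
def bubble_sort(arr):
    """Sort a list in place. Two nested loops -> O(n^2) comparisons in the worst case."""
    n = len(arr)
    for i in range(n - 1):
        swapped = False
        # After pass i, the largest i + 1 elements are in their final positions.
        for j in range(n - 1 - i):
            if arr[j] > arr[j + 1]:
                arr[j], arr[j + 1] = arr[j + 1], arr[j]
                swapped = True
        if not swapped:   # no swaps means the list is already sorted
            break
    return arr


print(bubble_sort([5, 1, 4, 2, 8]))
```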

Time Complexity of Common Data Structure Operations

Different data structures provide different performance benefits depending on the operation being performed. Understanding these differences helps developers choose the right data structure based on access, search, insertion, and deletion requirements.

The following table shows the typical time complexity of common operations:

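| Data Structure | Access | Search | Insert | Delete |
|---|---|---|---|---|
| Array | O(1) | O(n) | O(n) | O(n) |
| Linked List | O(n) | O(n) | O(1)* | O(1)* |
| Stack | O(n) | O(n) | O(1) | O(1) |
| Queue | O(n) | O(n) | O(1) | O(1) |
| Hash Table | N/A | O(1) average, O(n) worst | O(1) average, O(n) worst | O(1) average, O(n) worst |
| Binary Tree | O(n) | O(n) | O(n) | O(n) |
| Binary Search Tree (BST) | O(log n) average, O(n) worst | O(log n) average, O(n) worst | O(log n) average, O(n) worst | O(log n) average, O(n) worst |

*Linked list insertion and deletion are O(1) at the head, or at a known node in a doubly linked list. Finding the position first takes O(n).

A BST keeps O(log n) operations only while it stays balanced. Self-balancing trees such as AVL trees guarantee O(log n) even in the worst case.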
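The "average vs worst" entries for the BST depend on the tree's shape. This Python sketch uses a hypothetical `BSTNode` class with no balancing. It inserts the same seven keys in two different orders and compares the resulting heights. Search, insert, and delete follow one root-to-leaf path, so their cost is proportional to the height:

```python
class BSTNode:
    """A plain (unbalanced) binary search tree node, defined for this example."""

    def __init__(self, key):
        self.key = key
        self.left = None
        self.right = None


def insert(root, key):
    """Insert key and return the root. Cost is proportional to the tree height."""
    if root is None:
        return BSTNode(key)
    if key < root.key:
        root.left = insert(root.left, key)
    else:
        root.right = insert(root.right, key)
    return root


def height(root):
    """Number of nodes on the longest root-to-leaf path."""
    if root is None:
        return 0
    return 1 + max(height(root.left), height(root.right))


balanced = None
for key in [4, 2, 6, 1, 3, 5, 7]:     # middle keys first -> bushy tree
    balanced = insert(balanced, key)

degenerate = None
for key in [1, 2, 3, 4, 5, 6, 7]:     # sorted keys -> every node goes right
    degenerate = insert(degenerate, key)

print(height(balanced), height(degenerate))
```

With sorted input, the tree turns into a chain whose height equals n. That is why the worst case is O(n).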

How to Calculate Time Complexity (Practical Method)

Time complexity can be calculated by systematically analyzing how many times an operation executes as the input size increases. Instead of guessing, following a clear method makes the process easier and more accurate.

Example Problem

Print every ordered pair of elements from an array, including each element paired with itself.

Code:

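```python
def print_all_pairs(arr):
    n = len(arr)
    for i in range(n):          # outer loop: runs n times
        for j in range(n):      # inner loop: runs n times for each i
            print(arr[i], arr[j])
```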

Step-by-Step Analysis

- Step 1: Identify input size: The input size is n because both loops depend on the number of elements.

- Step 2: Count loop executions: The outer loop runs n times.

- Step 3: Multiply nested loops: For each outer loop iteration, the inner loop also runs n times. Total operations = n × n = n².

- Step 4: Drop constants: If the count were 2n² or 3n², the constant factors would be dropped, because Big O describes the growth rate. Both simplify to O(n²).

- Step 5: Pick the highest growth term: If the total operations were n² + n, we only consider n² because it grows faster.
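To check steps 3 to 5 empirically, this Python sketch counts operations for the nested loops from the example. It adds an extra single loop to create an n² + n total; the extra loop is illustrative and not part of the original problem. As n grows, the ratio to n² approaches 1, which is why the lower-order n term is dropped:

```python
def count_operations(n):
    """Nested loops (n * n) followed by a single loop (n): n^2 + n operations."""
    operations = 0
    for i in range(n):
        for j in range(n):
            operations += 1   # stands in for print(arr[i], arr[j])
    for k in range(n):
        operations += 1       # hypothetical extra linear pass
    return operations


for n in (10, 100, 1000):
    total = count_operations(n)
    print(f"n = {n}: {total} operations, {total / (n * n):.3f} x n^2")
```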

Result Summary

| Factor | Result |
|---|---|
| Input size | n |
| Outer loop runs | n times |
| Inner loop runs | n times |
| Total operations | n × n = n² |
| Time Complexity | O(n²) |

Following this structured approach makes it easier to analyze any algorithm and aligns with the method expected in technical interviews.

Time Complexity Analysis of Common Code Patterns

Instead of memorizing complexities one by one, it is easier to recognize common coding patterns and understand their typical time complexity. This approach helps you quickly estimate complexity during interviews and coding practice.

1. Single loop pattern

A single loop that runs from start to end usually results in linear complexity because the number of operations increases directly with input size.

Examples:

- Array traversal

- Finding the maximum element

- Linear search

Typical complexity: O(n)

2. Nested loop pattern

When one loop runs inside another, the inner loop runs in full for every iteration of the outer loop, so the counts multiply. Two nested loops over n elements give n × n operations, which is quadratic complexity.

Examples:

- Comparing all pairs in an array

- Bubble sort

- Duplicate detection using two loops

Typical complexity: O(n²)
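Nested loops do not both have to run n times to be quadratic. In this Python sketch, the inner loop starts at `i + 1`, so each unordered pair is checked once. That gives n(n - 1)/2 checks. The function name is illustrative. The count is about half of n², and dropping the constant ½ still leaves O(n²):

```python
def count_unique_pair_checks(n):
    """Count inner-loop runs when j starts at i + 1 (each unordered pair once)."""
    checks = 0
    for i in range(n):
        for j in range(i + 1, n):
            checks += 1
    return checks


for n in (10, 100, 1000):
    print(n, count_unique_pair_checks(n), n * (n - 1) // 2)
```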

3. Logarithmic pattern

Algorithms that repeatedly reduce the problem size, usually by half, follow logarithmic complexity.

Examples:

- Binary search

- Operations in balanced binary search trees

- Loops that repeatedly halve a search range or counter

Typical complexity: O(log n)
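The logarithmic pattern does not need a search at all. Any loop that halves its counter runs about log₂ n times, as this small Python sketch shows (the function name is illustrative):

```python
def halving_steps(n):
    """Count iterations of a loop that halves n (integer division) until it reaches 1."""
    steps = 0
    while n > 1:
        n //= 2
        steps += 1
    return steps


for n in (8, 1_024, 1_000_000):
    print(n, halving_steps(n))
```

The counts are 3, 10, and 19, which are ⌊log₂ n⌋ for each input.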

4. Recursive pattern

Recursive algorithms often follow divide-and-conquer approaches, where a problem is broken into smaller parts.

Examples:

- Merge sort

- Quick sort (O(n log n) on average, O(n²) in the worst case)

- Tree traversals

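Typical complexity depends on the recursion structure, but common cases are:

- O(n log n) for divide and conquer that splits the input in half and does linear work to combine the results, as in merge sort

- O(n) for simple recursion that visits each element once and does constant work per call, as in tree traversals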
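The two common cases can be written as recurrences. Simple recursion that removes one element per call follows T(n) = T(n - 1) + O(1), which solves to O(n). Merge sort follows T(n) = 2T(n/2) + O(n), which solves to O(n log n).

Below is a minimal Python sketch of the first case; the function name is illustrative. Python's default recursion limit is about 1,000 frames, so a loop is the better choice for long lists:

```python
def recursive_sum(arr, index=0):
    """Sum arr[index:] with one recursive call per element and O(1) work per call."""
    if index == len(arr):       # base case: no elements left
        return 0
    return arr[index] + recursive_sum(arr, index + 1)


print(recursive_sum([4, 8, 15, 16, 23, 42]))
```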

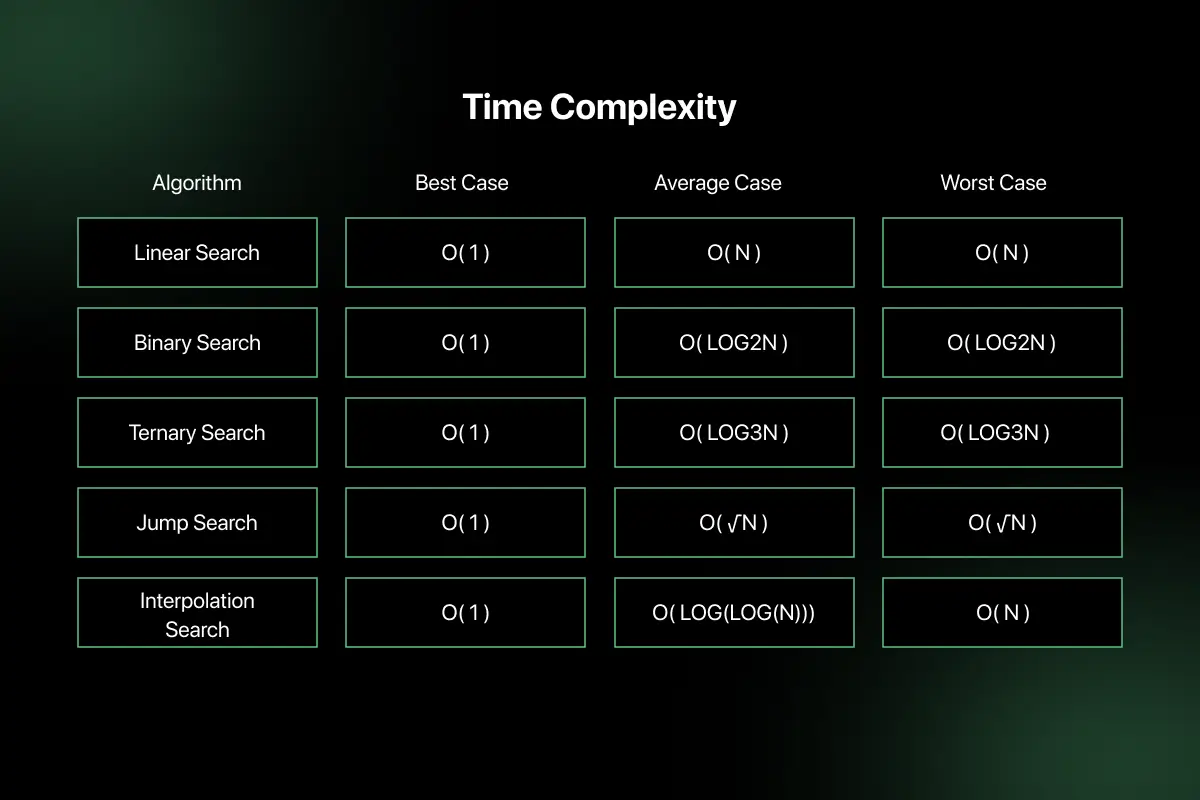

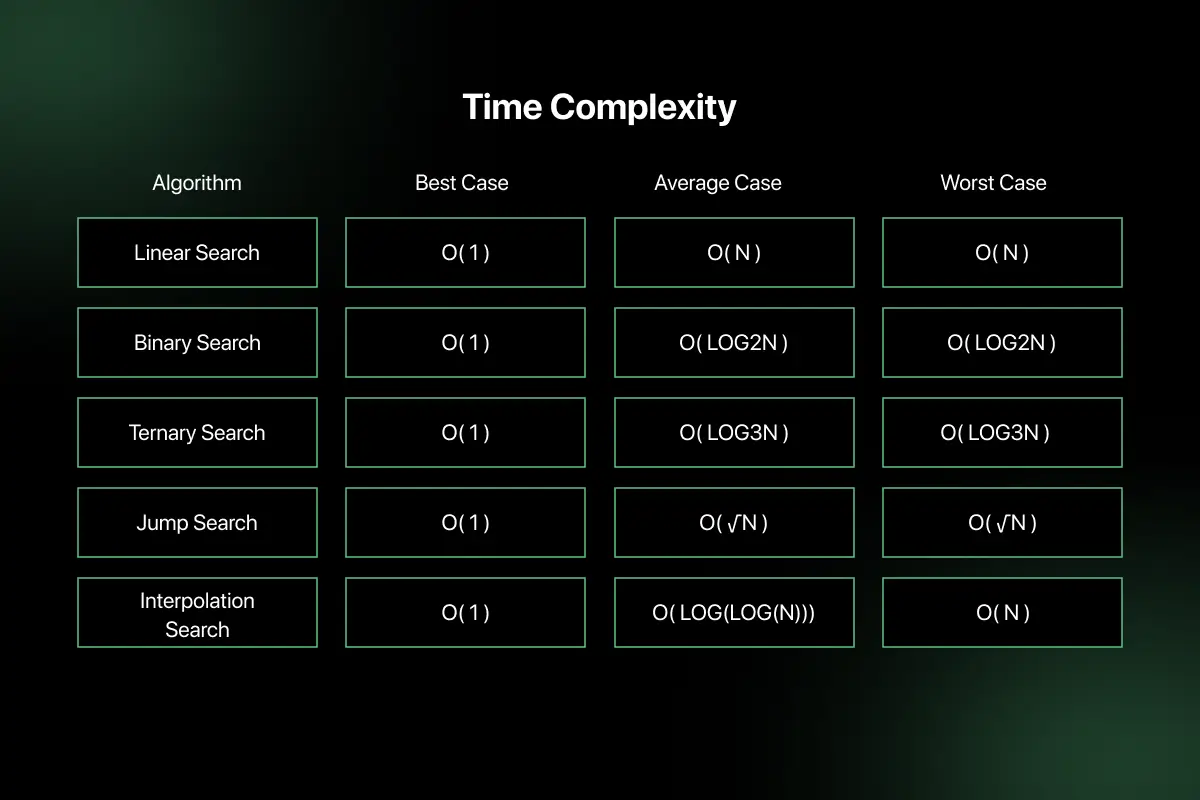

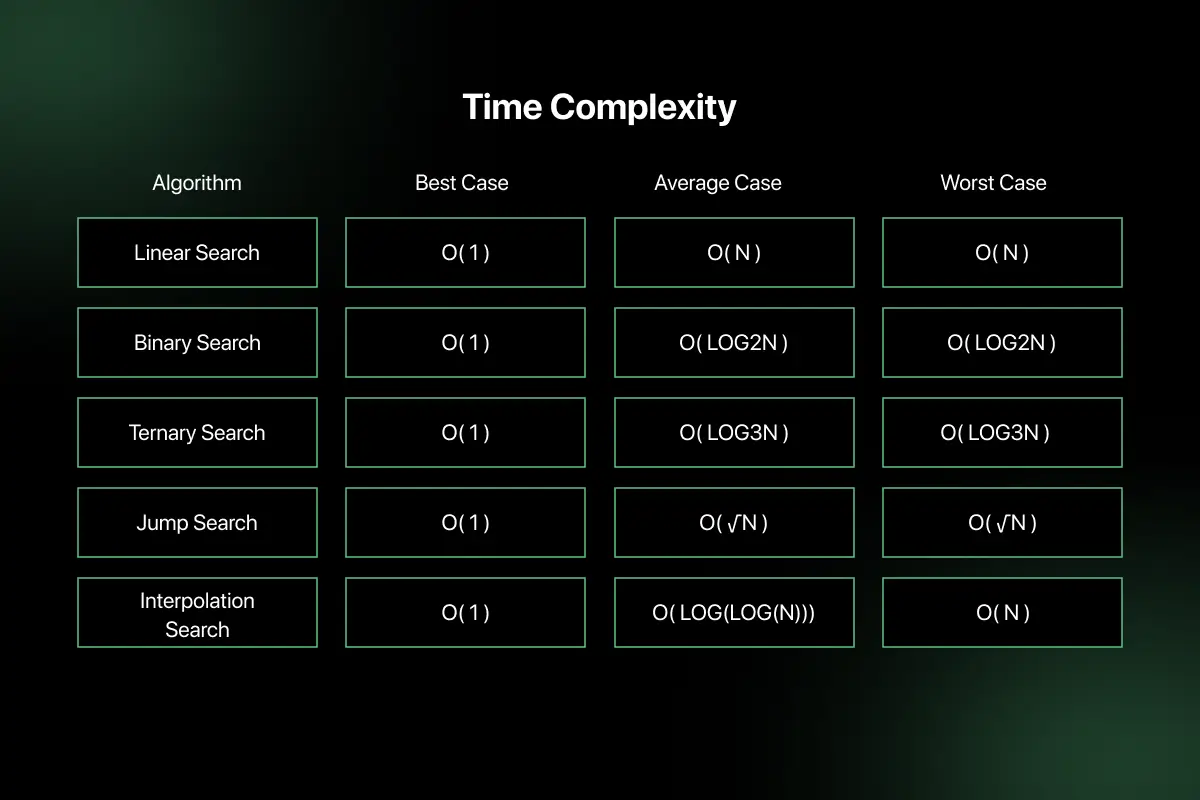

Best Case vs Average Case vs Worst Case Complexity

Time complexity can vary depending on the input condition. To better understand algorithm performance, it is analyzed under three scenarios: best case, average case, and worst case.

| Case | Meaning | Example (linear search) |
|---|---|---|
| Best Case | Minimum number of operations required | The target is the first element in the list |
| Average Case | Expected number of operations for typical input | Searching for an element in randomly ordered data |
| Worst Case | Maximum number of operations required | The target is the last element, or is not present at all |
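The three cases can be observed by counting comparisons in a linear search. This is a minimal Python sketch; the function name and sample data are illustrative:

```python
def linear_search_comparisons(arr, target):
    """Return (index, comparisons) for a front-to-back search; index is -1 if absent."""
    comparisons = 0
    for index, value in enumerate(arr):
        comparisons += 1
        if value == target:
            return index, comparisons
    return -1, comparisons


data = [7, 3, 9, 4, 1]
print(linear_search_comparisons(data, 7))    # best case: first element, 1 comparison
print(linear_search_comparisons(data, 1))    # worst case: last element, n comparisons
print(linear_search_comparisons(data, 42))   # worst case: not present, n comparisons
```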

Common Mistakes Beginners Make in Time Complexity

- Counting operations incorrectly: Beginners often count every small operation instead of focusing on the dominant operations that grow with input size.

- Ignoring nested loops: Loops placed one after another add up (n + n = 2n, which is O(n)), but a loop nested inside another multiplies (n × n = n², which is O(n²)). Beginners often treat both cases as O(n).

- Confusing log n with n: Logarithmic complexity grows much more slowly than linear complexity, but beginners often treat them as similar.

- Including constants in complexity: Writing O(2n) or O(5n) instead of simplifying it to O(n) is a common mistake.

- Forgetting worst-case analysis: Many analyze only the easiest scenario, while interviews usually expect the worst-case complexity.

- Ignoring input growth: Beginners often test with small inputs and fail to consider how the algorithm behaves with very large datasets.
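Comparing n and log₂ n side by side shows why the two should not be confused. Here is a quick Python check using powers of two, so the logarithms are whole numbers:

```python
import math

# Powers of two keep log2(n) a whole number for easy reading.
for n in (16, 1_024, 1_048_576, 1_073_741_824):
    print(f"n = {n:,}  ->  log2(n) = {math.log2(n):.0f}")
```

Going from about a thousand elements to about a billion multiplies n by roughly a million. Over the same range, log₂ n only triples, from 10 to 30.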

Time Complexity in Technical Interviews

What interviewers check:

- Can you optimize an O(n²) solution to O(n)?

- Can you explain complexity?

- Can you compare solutions?

Common technical interview questions:

- Find the time complexity of a given piece of code.

- Improve the algorithm.

- Explain why the solution is optimal.
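A typical "improve the algorithm" case is duplicate detection. Two nested loops give O(n²), while remembering already-seen values in a hash set brings it down to O(n) on average. Below is a minimal Python sketch of both versions; the function names are illustrative:

```python
def has_duplicate_quadratic(arr):
    """Brute force: compare every pair once -> O(n^2) time, O(1) extra space."""
    n = len(arr)
    for i in range(n):
        for j in range(i + 1, n):
            if arr[i] == arr[j]:
                return True
    return False


def has_duplicate_linear(arr):
    """Single pass with a set of seen values -> O(n) average time, O(n) extra space."""
    seen = set()
    for value in arr:
        if value in seen:
            return True
        seen.add(value)
    return False


print(has_duplicate_quadratic([3, 1, 4, 1, 5]), has_duplicate_linear([3, 1, 4, 1, 5]))
```

The faster version trades memory for speed, because the set can grow to n entries. Stating that trade-off explicitly is a good way to show you can compare solutions.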

How to Improve Time Complexity Skills

Improving time complexity skills requires a combination of conceptual learning, regular practice, and understanding how optimized solutions are designed.

Some effective ways to strengthen your time complexity understanding include:

- Learn DSA fundamentals: Build a strong foundation in data structures and algorithms to understand how different approaches affect performance.

- Practice complexity-based problems: Solve problems that specifically require analyzing and improving time complexity.

- Solve interview questions regularly: Practice coding questions commonly asked in technical interviews to improve optimization thinking.

- Analyze solutions after solving: Always review optimal solutions to understand how better time complexity is achieved.

- Practice MCQs for theoretical concepts: Use MCQ tests to strengthen understanding of Big O notation, algorithm behavior, and complexity comparisons.

- Study company-specific interview patterns: Focus on complexity questions frequently asked by companies like TCS, Infosys, Accenture, and Cognizant.

For structured preparation, you can practice DSA problems and complexity questions on PlacementPreparation.io and explore guided programming courses on GUVI to strengthen your problem-solving skills.

Real World Impact of Time Complexity

- Search Engines (Google): Faster ranking algorithms help deliver relevant search results within milliseconds.

- E-commerce Platforms (Amazon): Efficient recommendation algorithms improve product suggestions and user experience.

- Banking Systems: Optimized algorithms ensure fast and secure transaction processing.

- Streaming Platforms (Netflix): Time-efficient recommendation systems help deliver personalized content instantly.

- Cloud Computing: Efficient algorithms reduce processing time, helping companies lower infrastructure and computing costs.

- Social Media Platforms: Optimized feed algorithms help platforms like Instagram and LinkedIn handle millions of user requests efficiently.

Time Complexity vs Space Complexity

Time complexity and space complexity are two important measures used to evaluate the efficiency of an algorithm. While time complexity focuses on execution speed, space complexity focuses on memory usage.

| Time Complexity | Space Complexity |
|---|---|
| Measures number of execution steps | Measures memory usage |
| Focuses on speed optimization | Focuses on memory optimization |
| Related to CPU performance | Related to RAM usage |
| Helps reduce execution time | Helps reduce memory consumption |
| Important for performance tuning | Important for memory-efficient design |
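Two Python functions with the same O(n) time but different space complexity illustrate the distinction. The function names are illustrative:

```python
def reversed_copy(arr):
    """Build a new reversed list: O(n) time, O(n) extra space."""
    result = []
    for i in range(len(arr) - 1, -1, -1):
        result.append(arr[i])
    return result


def reverse_in_place(arr):
    """Swap elements from both ends toward the middle: O(n) time, O(1) extra space."""
    left, right = 0, len(arr) - 1
    while left < right:
        arr[left], arr[right] = arr[right], arr[left]
        left += 1
        right -= 1
    return arr


print(reversed_copy([1, 2, 3, 4]), reverse_in_place([1, 2, 3, 4]))
```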

Final Words

Time complexity is a fundamental concept in data structures that helps developers write efficient and scalable programs. It allows engineers to compare different solutions and choose the one that performs best as data grows.

To strengthen your preparation, you can practice time complexity questions and DSA problems on PlacementPreparation.io and explore structured programming courses from GUVI to build a strong problem-solving foundation.

FAQs

What is time complexity in data structures?

Time complexity is a way to measure how the running time of an algorithm increases as the input size grows, helping developers estimate performance without measuring actual execution time.

Why is time complexity important?

Time complexity is important because it helps developers choose efficient algorithms, write optimized code, build scalable systems, and perform better in technical interviews where optimization matters.

How do you calculate time complexity?

Time complexity is calculated by counting how many times operations run, analyzing loops and recursion, ignoring constants, and expressing the growth rate using Big O notation.

What does O(n) mean?

O(n) represents linear time complexity where the number of operations increases directly with the input size, such as traversing all elements of an array.

What does O(log n) mean?

O(log n) represents logarithmic complexity where the problem size reduces at each step, such as binary search where the search space is repeatedly divided in half.

Is time complexity asked in technical interviews?

Yes, time complexity is commonly asked in technical interviews because companies want candidates who can write efficient code and optimize solutions rather than just solving problems.

How can I improve my time complexity skills?

You can improve complexity skills by learning DSA fundamentals, practicing coding problems, analyzing optimal solutions, solving interview questions, and taking MCQ tests on algorithm concepts.

What is the difference between time complexity and space complexity?

Time complexity measures how execution time grows with input size, while space complexity measures how much memory an algorithm uses during execution.
