What Is Space Complexity in Data Structures?

Why do some applications crash with Out of Memory errors even when the code seems correct? Why do mobile apps need careful memory optimization to run smoothly? Why do companies pay close attention to RAM usage when building large-scale systems?

The answer often lies in space complexity, which measures how much memory an algorithm requires as the input size increases. While many developers focus only on speed, memory efficiency is equally important for building stable and scalable applications.

Understanding space complexity helps developers design memory-efficient solutions, avoid performance issues, and make better data structure choices. It is also an important concept tested in technical interviews, where candidates are expected to optimize both time and memory usage.

In this article, you will learn what space complexity means, why memory optimization matters in real systems, how it is evaluated in interviews, and how to calculate it using simple methods.

What Is Space Complexity?

Space complexity measures the total amount of memory an algorithm requires as the input size increases. Instead of focusing on the exact memory used in bytes, it evaluates how memory usage grows relative to the input.

This helps developers understand whether an algorithm will remain memory-efficient when handling large datasets.

Space complexity typically includes the following components:

- Variables: Memory used by variables, counters, and temporary values during execution.

- Data structures: Memory required for arrays, linked lists, stacks, queues, or other structures used in the algorithm.

- Recursion stack: Memory used by function calls stored in the call stack during recursive execution.

- Temporary storage: Extra memory used for intermediate calculations such as helper arrays or buffers.

Space Complexity vs Memory Usage

Many beginners assume space complexity means the exact RAM used by a program. However, space complexity focuses on growth behavior rather than exact memory consumption.

| Factor | Meaning |
|---|---|
| Memory usage | The actual amount of RAM consumed during execution |
| Space complexity | How memory requirements increase as input size grows |
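To see the difference in practice, the sketch below uses Python's standard `tracemalloc` module to measure the peak bytes allocated while building lists of different sizes. The helper names `build_list` and `peak_bytes` are illustrative. The exact byte counts depend on the Python version and platform; what matters is that doubling n should roughly double the peak, which is the O(n) growth that space complexity describes.

```python
import tracemalloc

def build_list(n):
    # Stores n integers, so memory grows linearly with n: O(n) space.
    return [i for i in range(n)]

def peak_bytes(func, n):
    """Run func(n) and return the peak number of bytes traced during the call."""
    tracemalloc.start()
    func(n)
    _, peak = tracemalloc.get_traced_memory()
    tracemalloc.stop()
    return peak

if __name__ == "__main__":
    for n in (10_000, 20_000, 40_000):
        # Exact numbers vary by platform; the growth pattern is what matters.
        print(f"n={n:>6}: peak ~ {peak_bytes(build_list, n):,} bytes")
```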

Components of Space Complexity

Space complexity is made up of different types of memory used during the execution of an algorithm. Understanding these components helps developers identify where memory is being used and how it can be optimized.

The main components of space complexity include:

1. Fixed Space

Fixed space refers to the memory that does not change with input size. This memory remains constant regardless of how large the input becomes.

Examples:

- Variables used in the program

- Constants

- Program instructions

- Fixed-size data types

Typical complexity: O(1) because the memory requirement remains constant.
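A minimal Python sketch of an algorithm that uses only fixed space: finding the largest value in a list keeps one running variable no matter how long the list is. The function name `find_max` is illustrative, and the sketch assumes a non-empty list.

```python
def find_max(values):
    """Return the largest element of a non-empty list using O(1) extra space."""
    best = values[0]       # one variable, however long the list is
    for value in values:   # iterating does not copy the list
        if value > best:
            best = value
    return best

print(find_max([4, 17, 9, 3]))  # 17
```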

2. Variable Space

Variable space refers to memory that increases or decreases depending on the input size. This is the main factor considered in space complexity analysis.

Examples:

- Arrays that store input data

- Dynamically allocated memory

- Recursion call stack

- Data structures created based on input size

Typical complexity: Often O(n) because memory grows with input.
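In the following sketch (the name `collect_evens` is illustrative), the size of the result list depends on the input: it holds up to n elements when every value is even, so the variable space is O(n) in the worst case.

```python
def collect_evens(values):
    """Return a new list containing the even values from the input."""
    evens = []                 # grows with the input: up to len(values) items
    for value in values:
        if value % 2 == 0:
            evens.append(value)
    return evens

print(collect_evens([1, 2, 3, 4, 6]))  # [2, 4, 6]
```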

3. Auxiliary Space

Auxiliary space is the extra, temporary memory an algorithm needs to solve a problem, excluding the memory used to store the input. Total space complexity is therefore the input space plus the auxiliary space. Auxiliary space is a concept frequently asked about in technical interviews.

Examples:

- Temporary arrays used in merge sort

- Hash maps used for fast lookup

- Stack used in recursion

- Helper data structures

Understanding auxiliary space helps developers design memory-efficient algorithms and is often used to compare optimized solutions in interviews.
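The merge sort example from the list can be sketched in Python as follows. This version (the function name `merge_sort` is illustrative) returns a new sorted list. The temporary slices and merged lists alive at any one moment add up to O(n) auxiliary space, and the recursion adds O(log n) stack frames on top of that.

```python
def merge_sort(arr):
    """Return a new sorted list; auxiliary space is O(n) plus O(log n) stack."""
    if len(arr) <= 1:
        return list(arr)               # copy so the caller always gets a new list
    mid = len(arr) // 2
    left = merge_sort(arr[:mid])       # slicing creates temporary arrays
    right = merge_sort(arr[mid:])
    merged = []                        # temporary output of size len(arr)
    i = j = 0
    while i < len(left) and j < len(right):
        if left[i] <= right[j]:        # <= keeps the sort stable
            merged.append(left[i])
            i += 1
        else:
            merged.append(right[j])
            j += 1
    merged.extend(left[i:])            # append whichever half has items left
    merged.extend(right[j:])
    return merged

print(merge_sort([5, 2, 9, 1, 5, 6]))  # [1, 2, 5, 5, 6, 9]
```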

Why Space Complexity Matters in Data Structures

Space complexity is important in data structures because it helps developers design memory-efficient programs that can handle large data without performance issues or crashes.

Some key reasons why space complexity matters include:

1. Memory Optimization

Efficient memory usage is critical when applications run on devices with limited resources.

Example: Mobile applications must minimize memory usage to avoid slow performance and excessive battery consumption.

2. Handling Large Datasets

When working with large amounts of data, inefficient memory usage can lead to system slowdowns or failures.

Example: Big data applications must use memory-efficient data structures to process large volumes of information smoothly.

3. Improving System Stability

Poor memory management can cause programs to crash when they run out of available memory.

Example: Applications with inefficient memory allocation may encounter Out of Memory errors when processing large inputs.

4. Interview Evaluation

Space complexity is often evaluated in technical interviews to test whether candidates can design optimized and memory-efficient solutions.

Interviewers usually check whether candidates can:

- Reduce unnecessary memory usage

- Choose appropriate data structures

- Optimize auxiliary space

- Balance time and space tradeoffs

Understanding space complexity helps developers write stable applications and perform better in technical interviews.

Space Complexity of Common Data Structures

Different data structures require different amounts of memory depending on how they store and manage data. Understanding their space complexity helps developers choose structures that balance memory usage and performance.

The following table shows the typical space complexity of commonly used data structures:

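| Data Structure | Space Complexity |
|---|---|
| Array | O(n) |
| Linked List | O(n) (each node also stores a pointer, which raises the constant factor but not the growth rate) |
| Stack | O(n) |
| Queue | O(n) |
| Hash Table | O(n) |
| Binary Tree | O(n) |
| Graph | O(V + E) with an adjacency list; O(V²) with an adjacency matrix |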

Here, V represents the number of vertices and E represents the number of edges in a graph.
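The O(V + E) figure for graphs assumes an adjacency-list representation. Here is a minimal Python sketch for an undirected graph (the function name `build_adjacency_list` is illustrative): one list per vertex accounts for V, and each edge is stored twice, once in each endpoint's list, which is still proportional to E.

```python
def build_adjacency_list(num_vertices, edges):
    """Build an undirected graph as a list of neighbor lists."""
    adjacency = [[] for _ in range(num_vertices)]  # V empty lists
    for u, v in edges:
        adjacency[u].append(v)  # each edge is recorded at both endpoints,
        adjacency[v].append(u)  # so neighbor entries total 2E
    return adjacency

graph = build_adjacency_list(4, [(0, 1), (1, 2), (2, 3)])
print(graph)  # [[1], [0, 2], [1, 3], [2]]
```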

How to Calculate Space Complexity

Space complexity can be calculated by identifying how much extra memory an algorithm uses apart from the input.

Following a structured method makes it easier to analyze memory usage correctly.

Example: Create a copy of an array of n elements.
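The code for this example is not reproduced in the text. Below is a minimal Python sketch consistent with the analysis that follows, using the names `arr`, `temp`, and `i` that the steps refer to; the function name `copy_array` is illustrative.

```python
def copy_array(arr):
    """Return a new list with the same elements as arr."""
    n = len(arr)
    temp = [0] * n        # extra array of n elements: O(n) auxiliary space
    for i in range(n):    # loop variable i: O(1) space
        temp[i] = arr[i]
    return temp

original = [7, 3, 5]
copied = copy_array(original)
print(copied)              # [7, 3, 5]
print(copied is original)  # False: a separate list was allocated
```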

Step-by-Step Analysis

- Step 1: Identify input size: The input size is n because the algorithm processes n elements.

- Step 2: Identify extra memory: The algorithm creates a new array temp[n], which requires memory proportional to n.

- Step 3: Ignore constants: The loop variable i and other fixed-size variables use constant memory, so they do not affect the Big O result.

- Step 4: Consider the recursion stack: There is no recursion in this example, so no extra stack memory is used.

- Step 5: Express using Big O: The extra memory is proportional to n, so the space complexity is O(n).

Result Summary

| Factor | Result |
|---|---|
| Input size | n |
| Extra array | n elements |
| Variables | Constant space |
| Recursion memory | Not used |
| Space Complexity | O(n) |

Following this approach helps developers identify whether an algorithm is memory efficient, and it is a common method used during technical interviews.

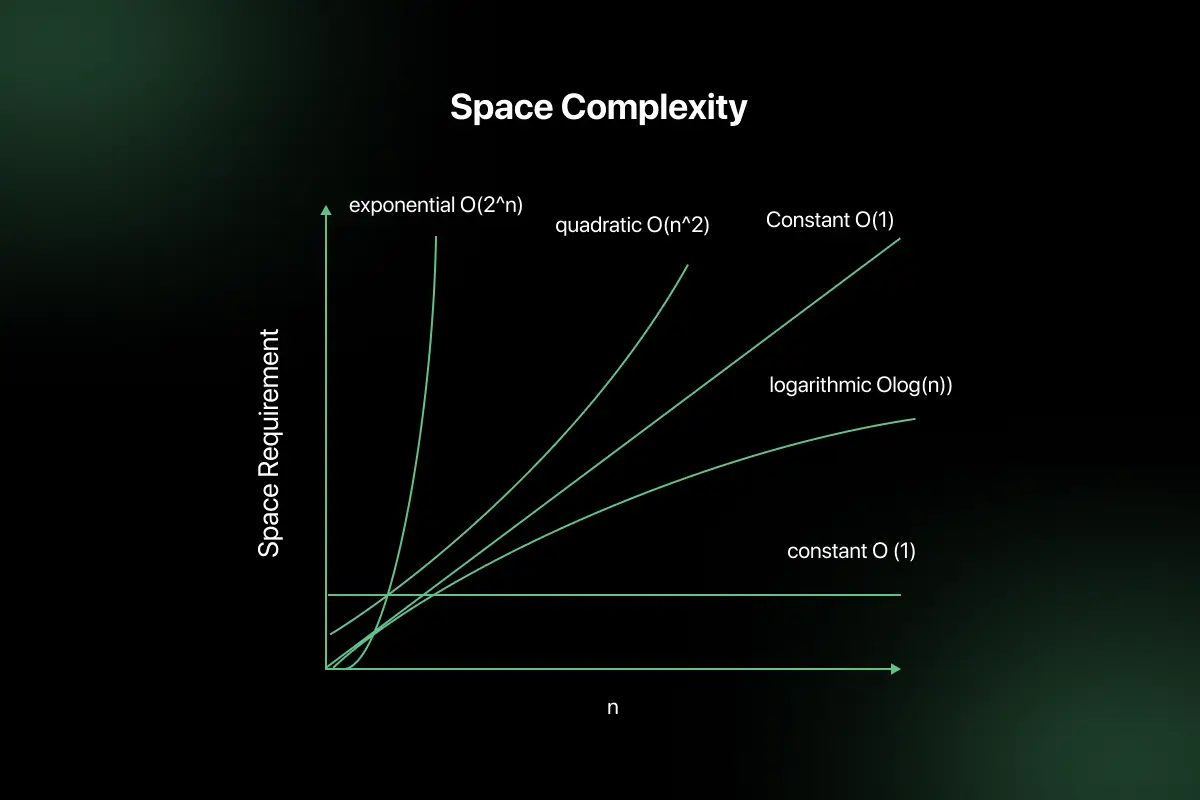

Space Complexity Analysis of Code Patterns

Instead of memorizing space complexity for different programs, it is easier to identify common coding patterns and understand how much memory they typically use. Recognizing these patterns helps quickly estimate space complexity during coding and interviews.

1. Simple Variable Usage

When an algorithm uses only a few variables and does not allocate extra memory based on input size, the space complexity remains constant.

Example:

- Using counters or temporary variables

- Swapping two numbers using a variable

Typical space complexity: O(1) because memory does not grow with input size.
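For example, reversing a list in place needs only two index variables and one temporary variable per swap, so its extra space stays O(1) for any list length. Here is a minimal Python sketch (the name `reverse_in_place` is illustrative):

```python
def reverse_in_place(arr):
    """Reverse arr without allocating a second list: O(1) extra space."""
    left, right = 0, len(arr) - 1
    while left < right:
        temp = arr[left]          # swap using one temporary variable
        arr[left] = arr[right]
        arr[right] = temp
        left += 1
        right -= 1

numbers = [1, 2, 3, 4, 5]
reverse_in_place(numbers)
print(numbers)  # [5, 4, 3, 2, 1]
```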

2. Array Allocation

When an algorithm creates a new array based on input size, the memory requirement increases proportionally.

Example:

- Creating a copy of an array

- Storing intermediate results in another array

Typical space complexity: O(n) because memory grows with the number of elements.

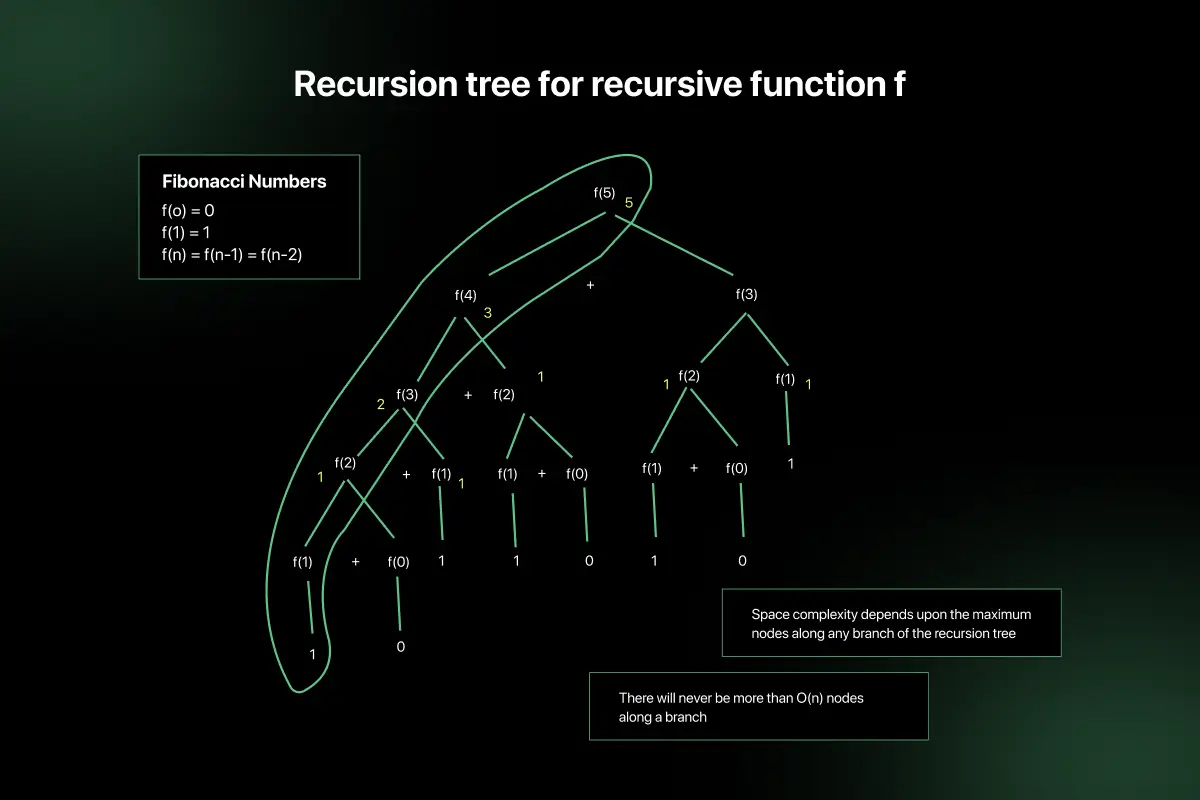

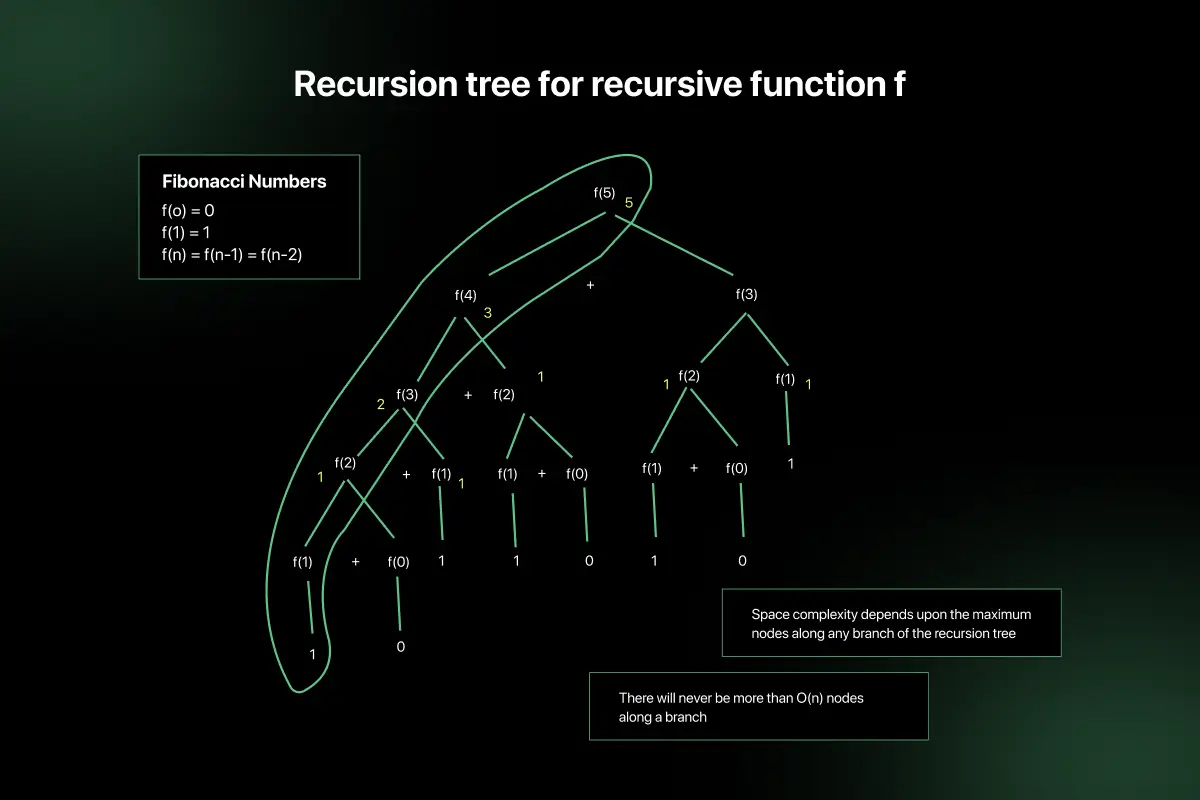

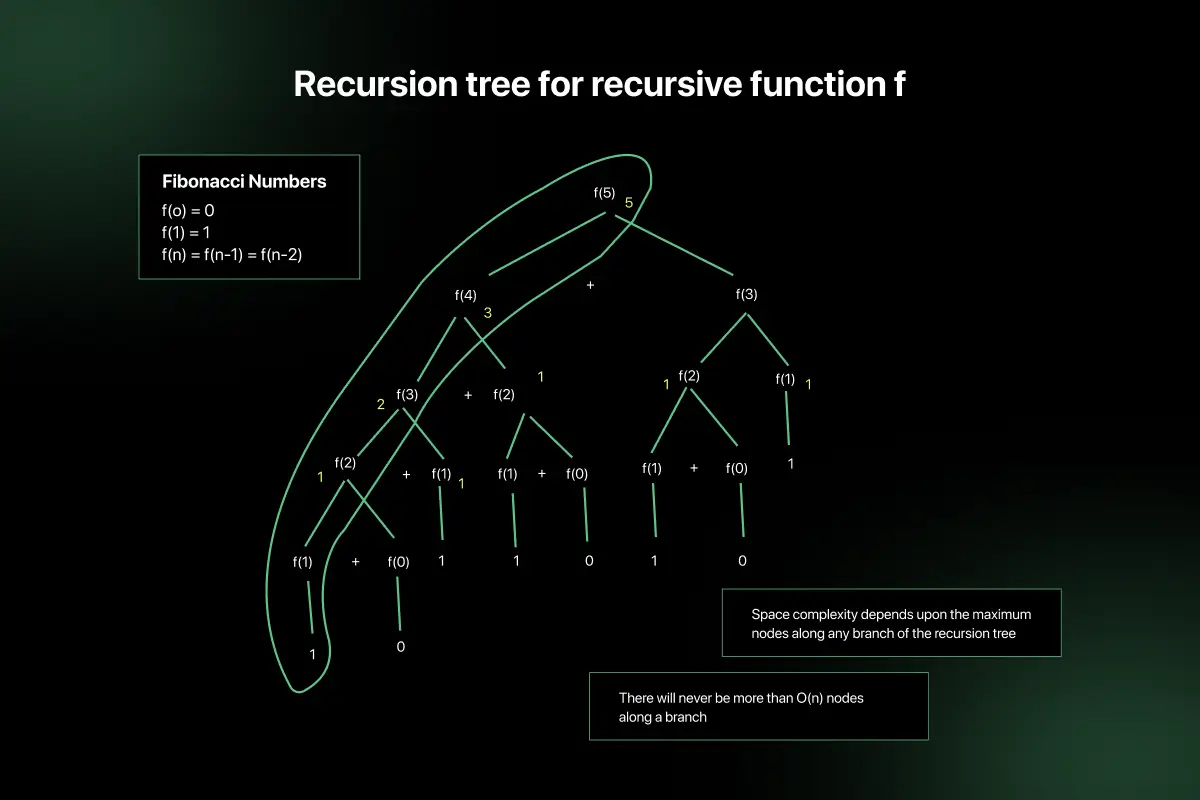
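Storing intermediate results in another array is common in preprocessing steps. The Python sketch below (the name `prefix_sums` is illustrative) builds a list of running totals of length n + 1, so it uses O(n) extra space. In return, the sum of any range can later be read in O(1) time as `sums[j] - sums[i]`.

```python
def prefix_sums(values):
    """Return sums where sums[k] is the total of the first k values."""
    sums = [0] * (len(values) + 1)   # n + 1 slots: O(n) extra space
    for k, value in enumerate(values):
        sums[k + 1] = sums[k] + value
    return sums

sums = prefix_sums([3, 1, 4, 1, 5])
print(sums)               # [0, 3, 4, 8, 9, 14]
print(sums[4] - sums[1])  # 6, the total of values[1:4] = 1 + 4 + 1
```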

3. Recursion Pattern

Recursive algorithms use memory in the function call stack. Each recursive call adds a new stack frame, increasing memory usage.

Example:

- Recursive factorial calculation

- Recursive tree traversal

Typical space complexity: Proportional to the maximum recursion depth, for example O(n) for recursive factorial and O(h) for traversing a tree of height h.
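Here is a minimal Python sketch of the factorial example (the function name `factorial` is illustrative). Each call waits for `factorial(n - 1)` to return, so about n frames sit on the stack at the deepest point. That gives O(n) space even though no array is allocated. In CPython, the recursion limit reported by `sys.getrecursionlimit()` (typically 1000) caps how deep this can go.

```python
def factorial(n):
    """Recursive factorial: each pending call keeps a stack frame alive."""
    if n <= 1:
        return 1                     # base case: the deepest frame
    return n * factorial(n - 1)      # this frame waits for the call below it

print(factorial(5))  # 120
```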

4. Hash Structures

Using hash maps or hash sets requires additional memory to store key-value pairs or elements.

Example:

- Using a HashMap for fast lookup

- Detecting duplicates using a HashSet

Typical space complexity: O(n) because additional storage depends on input size.
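Detecting duplicates shows the time and space tradeoff behind this pattern. In the Python sketch below (function names are illustrative), the set-based version uses O(n) extra space to finish in O(n) average time. The nested-loop version uses only O(1) extra space but takes O(n²) time.

```python
def has_duplicates_set(values):
    """O(n) average time, O(n) extra space: remembers every value seen."""
    seen = set()
    for value in values:
        if value in seen:
            return True
        seen.add(value)            # the set can grow to len(values) items
    return False

def has_duplicates_pairs(values):
    """O(n^2) time, O(1) extra space: compares every pair of positions."""
    n = len(values)
    for i in range(n):
        for j in range(i + 1, n):
            if values[i] == values[j]:
                return True
    return False

print(has_duplicates_set([3, 8, 1, 8]))    # True
print(has_duplicates_pairs([3, 8, 1, 9]))  # False
```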

Recognizing these patterns helps developers quickly evaluate memory usage without detailed calculation.

Time Complexity vs Space Complexity

| Time Complexity | Space Complexity |
|---|---|
| Measures how execution time grows with input size | Measures how memory usage grows with input size |
| Focuses on algorithm speed | Focuses on memory consumption |
| Helps optimize performance | Helps optimize memory usage |
| Related to the number of operations | Related to the amount of storage required |
| Important for fast execution | Important for memory-efficient programs |
| Example: O(n), O(log n), O(n²) | Example: O(1), O(n) auxiliary space |
| Evaluated in coding interviews for optimization | Evaluated to check memory efficiency |

Common Mistakes Beginners Make in Space Complexity

- Ignoring recursion memory: Forgetting that each recursive call uses stack memory.

- Forgetting auxiliary space: Not counting extra memory like temporary arrays or hash maps.

- Counting input memory incorrectly: Including input storage when only extra memory should be analyzed.

- Confusing time and space complexity: Mixing execution speed with memory usage.

- Ignoring data structure overhead: Not considering extra memory used by pointers or references.

- Assuming variables increase space: Small fixed variables usually count as O(1), not O(n).
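Ignoring recursion memory is an easy mistake to make because the call stack never appears as a variable. In this Python sketch (the name `recursive_sum` is illustrative), no list is allocated. Even so, the call stack holds one frame per element, so the auxiliary space is O(n), not O(1).

```python
def recursive_sum(values, index=0):
    """Sum values[index:] recursively without slicing the list."""
    if index == len(values):
        return 0
    # No new list is created, but this frame stays on the stack until the
    # call below returns, so len(values) + 1 frames exist at the deepest point.
    return values[index] + recursive_sum(values, index + 1)

print(recursive_sum([2, 4, 6, 8]))  # 20
```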

Space Complexity in Technical Interviews

Why interviewers ask:

- Memory optimization ability

- Efficient data structure choice

- Tradeoff decisions

Common technical interview questions:

- Reduce memory usage.

- Optimize recursion.

- Replace the extra array.

- Improve space complexity.
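A typical "replace the extra array" task is computing the nth Fibonacci number. The table version stores every value up to n, which takes O(n) space. Because each step only needs the previous two values, those can be kept in two variables instead, which takes O(1) space. A minimal Python sketch with illustrative function names:

```python
def fib_table(n):
    """O(n) space: stores every Fibonacci number from F(0) to F(n)."""
    if n < 2:
        return n
    table = [0] * (n + 1)
    table[1] = 1
    for k in range(2, n + 1):
        table[k] = table[k - 1] + table[k - 2]
    return table[n]

def fib_constant_space(n):
    """O(1) space: keeps only the two most recent values."""
    prev, curr = 0, 1              # F(0), F(1)
    for _ in range(n):
        prev, curr = curr, prev + curr
    return prev                    # after n steps, prev holds F(n)

print(fib_table(10), fib_constant_space(10))  # 55 55
```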

How to Improve Space Optimization Skills

You can improve space optimization skills by focusing on memory-efficient coding practices and understanding how algorithms use extra memory.

Some simple ways to improve include:

- Learn DSA memory concepts: Understand how different data structures use memory.

- Practice coding problems: Solve problems that involve reducing extra space usage.

- Analyze optimal solutions: Study how efficient solutions reduce auxiliary space.

- Study company interview patterns: Focus on memory optimization questions asked in technical interviews.

- Practice MCQs: Test your theoretical understanding of space complexity concepts.

- Understand recursion memory: Learn how recursive calls use stack space and how to optimize them.

Regular practice and reviewing optimized solutions can help you write more memory-efficient programs.

Real World Use Cases of Space Complexity

- Mobile applications: Helps reduce memory usage so apps run smoothly on devices with limited RAM.

- Artificial Intelligence: Used to manage model memory and optimize large datasets.

- Cloud computing: Helps reduce RAM usage, lowering infrastructure costs.

- Gaming systems: Ensures efficient memory usage for better performance and smooth gameplay.

- FinTech applications: Helps manage transaction data efficiently without memory overflow.

- Database systems: Optimizes memory usage for faster data processing and storage.

Final Words

Space complexity is an important concept that helps developers design memory-efficient algorithms and prevent performance issues caused by excessive memory usage. Understanding how algorithms use memory is essential when working with large datasets and building scalable applications.

It is also an important topic in technical interviews where candidates are expected to optimize both time and space. Regular practice of DSA problems, memory optimization techniques, and interview questions can help strengthen this skill.

FAQs

What is space complexity?

Space complexity measures how much memory an algorithm needs as the input size increases. It includes memory used by variables, data structures, recursion, and temporary storage.

What is auxiliary space?

Auxiliary space refers to the extra memory used by an algorithm apart from the input data. This includes temporary arrays, stacks, hash maps, or helper variables.

How is space complexity calculated?

Space complexity is calculated by identifying extra memory used, ignoring constant variables, considering recursion stack usage, and expressing the total memory growth using Big O notation.

What is the difference between time complexity and space complexity?

Time complexity measures how execution time grows with input size, while space complexity measures how memory usage grows. One focuses on speed, the other on memory efficiency.

Is space complexity asked in technical interviews?

Yes, space complexity is often asked in technical interviews to test whether candidates can design memory-efficient solutions and understand time–space optimization tradeoffs.

How can space complexity be reduced?

Space complexity can be reduced by avoiding unnecessary data structures, reusing variables, optimizing recursion, using in-place algorithms, and choosing memory-efficient data structures.

Why is memory optimization important?

Memory optimization prevents application crashes, improves performance, reduces infrastructure costs, and helps systems handle large datasets efficiently without excessive resource consumption.

What does O(1) space complexity mean?

O(1) space complexity means an algorithm uses constant memory regardless of input size, such as using only a few fixed variables without allocating extra data structures.

