Time Complexities of Different Data Structures

Choosing the wrong data structure can make a program slow and hard to scale. For example, an array is a poor fit when elements are frequently inserted at the front or in the middle, because each insertion shifts every element after it. A linked list can perform the same insertion in constant time once the position is known.

Companies focus heavily on operational efficiency because real systems handle millions of requests, and even small inefficiencies can lead to performance bottlenecks. This is why understanding the time complexity of different data structures is a core skill in software engineering and technical interviews.

In this article, you will learn the time complexity of major data structures, how different operations like search, insert, and delete compare, and why this knowledge is important for DSA preparation and coding interviews.

What Time Complexity Means in Data Structures

In data structures, efficiency depends on how fast operations can be performed, not just on the structure itself. The same data structure can perform differently depending on the operation being executed.

Time complexity is usually analyzed based on these core operations:

- Access: Retrieving an element using its index or position.

- Search: Finding an element based on its value.

- Insert: Adding a new element into the data structure.

- Delete: Removing an existing element from the data structure.

Understanding how quickly these operations work helps developers choose the right data structure for performance-critical applications.

Time Complexity of Linear Data Structures

1. Array Time Complexity Table

| Operation | Time Complexity |
| --- | --- |
| Access | O(1) |
| Search | O(n) |
| Insert | O(n) |
| Delete | O(n) |

Arrays support constant-time access by index, but inserting or deleting anywhere except the end takes O(n) because every later element must be shifted. Appending to the end of a dynamic array is amortized O(1).
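To make the shifting cost concrete, here is a minimal sketch that uses a Python list as the array. The helper name `insert_at` is hypothetical and written out by hand only to expose the shifting loop; in practice `list.insert` does the same work internally.

```python
def insert_at(items, index, value):
    """Insert value at index by shifting later elements one slot to the right."""
    items.append(None)                       # grow the array by one slot
    for i in range(len(items) - 1, index, -1):
        items[i] = items[i - 1]              # each shift is one move: up to n moves
    items[index] = value
    return items


scores = [10, 20, 30, 40]
third = scores[2]            # index access: O(1), no traversal needed
insert_at(scores, 1, 15)     # insert near the front: O(n) because of the shifts
```

The closer the insertion point is to the front, the more elements the loop has to move, which is where the O(n) bound comes from.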

2. Linked List Time Complexity

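| Operation | Time Complexity |
| --- | --- |
| Access | O(n) |
| Search | O(n) |
| Insert (at head or after a known node) | O(1) |
| Delete (at head or after a known node) | O(1) |

Linked lists allow fast insertion and deletion because only a few pointers need updating once the position is known. Reaching that position, like any access or search, requires traversing from the head, so inserting or deleting at an arbitrary index costs O(n) overall.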
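The difference between pointer updates and traversal can be sketched with a small singly linked list. The class and method names below (`Node`, `SinglyLinkedList`, `push_front`, `insert_after`, `get`) are illustrative assumptions, not a standard library API.

```python
class Node:
    def __init__(self, value, next=None):
        self.value = value
        self.next = next


class SinglyLinkedList:
    def __init__(self):
        self.head = None

    def push_front(self, value):
        # O(1): only the head pointer changes.
        self.head = Node(value, self.head)

    def insert_after(self, node, value):
        # O(1): assumes the caller already holds a reference to `node`.
        node.next = Node(value, node.next)

    def get(self, index):
        # O(n): walk from the head, one node per step (index must be >= 0).
        current = self.head
        for _ in range(index):
            if current is None:
                break
            current = current.next
        if current is None:
            raise IndexError("index out of range")
        return current

    def to_list(self):
        values, current = [], self.head
        while current is not None:
            values.append(current.value)
            current = current.next
        return values


numbers = SinglyLinkedList()
numbers.push_front(3)
numbers.push_front(1)                 # list is now 1 -> 3
first = numbers.get(0)                # traversal to find the position
numbers.insert_after(first, 2)        # constant-time pointer update: 1 -> 2 -> 3
```

Note that the O(1) insertion only applies once `first` is in hand; finding a node by index or value still costs a traversal.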

3. Stack Time Complexity

| Operation | Time Complexity |
| --- | --- |
| Push | O(1) |
| Pop | O(1) |
| Peek | O(1) |
| Search | O(n) |

A stack follows the LIFO (Last In, First Out) principle: push, pop, and peek all work on the top element in constant time, while finding an arbitrary element requires scanning the stack in linear time.
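As a quick illustration, a Python list can serve as a stack by treating its end as the top. This is one common choice rather than the only one; `append` on a list is amortized O(1).

```python
stack = []
stack.append("a")        # push: amortized O(1)
stack.append("b")
stack.append("c")

top = stack[-1]          # peek: O(1), reads the top without removing it
popped = stack.pop()     # pop: O(1), removes and returns the top
found = "a" in stack     # search: O(n), scans the elements one by one
```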

4. Queue Time Complexity

| Operation | Time Complexity |
| --- | --- |
| Enqueue | O(1) |
| Dequeue | O(1) |
| Peek | O(1) |
| Search | O(n) |

A queue follows the FIFO (First In, First Out) principle: elements are added at the rear and removed from the front in constant time, while searching still requires traversal.
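A short sketch of these operations using `collections.deque` from the Python standard library, which supports O(1) appends and pops at both ends. A plain list is a weaker fit here because `list.pop(0)` shifts every remaining element and costs O(n).

```python
from collections import deque

queue = deque()
queue.append("job-1")        # enqueue at the rear: O(1)
queue.append("job-2")
queue.append("job-3")

front = queue[0]             # peek at the front: O(1)
served = queue.popleft()     # dequeue from the front: O(1)
waiting = "job-3" in queue   # search: O(n)
```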

Time Complexity of Non-Linear Data Structures

1. Hash Table Complexity

| Operation | Average | Worst |
| --- | --- | --- |
| Search | O(1) | O(n) |
| Insert | O(1) | O(n) |
| Delete | O(1) | O(n) |

Hash tables provide very fast operations on average, but collisions (when multiple keys map to the same index) can degrade performance to O(n) in the worst case.
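The effect of collisions can be sketched with a toy hash table that resolves them by chaining. The class name `ChainedHashTable` and the `bucket_count` parameter are hypothetical, and the sketch omits resizing, which production hash maps rely on to keep chains short.

```python
class ChainedHashTable:
    """Toy hash table: each bucket is a list of (key, value) pairs."""

    def __init__(self, bucket_count=8):
        self.buckets = [[] for _ in range(bucket_count)]

    def _bucket(self, key):
        # The hash picks a bucket directly: O(1) to locate it.
        return self.buckets[hash(key) % len(self.buckets)]

    def put(self, key, value):
        bucket = self._bucket(key)
        for i, (existing_key, _) in enumerate(bucket):
            if existing_key == key:
                bucket[i] = (key, value)     # overwrite an existing key
                return
        bucket.append((key, value))

    def get(self, key, default=None):
        # Cost is proportional to the chain length in this bucket.
        for existing_key, value in self._bucket(key):
            if existing_key == key:
                return value
        return default

    def remove(self, key):
        bucket = self._bucket(key)
        for i, (existing_key, _) in enumerate(bucket):
            if existing_key == key:
                del bucket[i]
                return True
        return False


# With a single bucket every key collides, so each lookup scans one long
# chain. This is the O(n) worst case in miniature.
crowded = ChainedHashTable(bucket_count=1)
for name, age in [("ana", 31), ("raj", 27), ("li", 45)]:
    crowded.put(name, age)
```

With enough buckets and a well-distributed hash, chains stay short and the average cost per operation stays close to constant.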

2. Binary Tree Complexity

| Operation | Time Complexity |
| --- | --- |
| Search | O(n) |
| Insert | O(n) |
| Delete | O(n) |

A general binary tree has no ordering rule, so a search may have to visit every node, whatever the shape of the tree. Insertion and deletion are O(n) when the target position or node must first be located by traversal.
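The linear search cost can be sketched on a small binary tree whose values follow no ordering rule, so the search has no way to skip a subtree. `TreeNode` and `find` are illustrative names; `find` also returns how many nodes it visited to make the cost visible.

```python
class TreeNode:
    def __init__(self, value, left=None, right=None):
        self.value = value
        self.left = left
        self.right = right


def find(root, target):
    """Depth-first search; returns (found, nodes_visited)."""
    stack = [root] if root is not None else []
    visited = 0
    while stack:
        node = stack.pop()
        visited += 1
        if node.value == target:
            return True, visited
        # Push right first so the left child is explored first.
        if node.right is not None:
            stack.append(node.right)
        if node.left is not None:
            stack.append(node.left)
    return False, visited


#        8
#       / \
#      3   10
#     / \
#   14   1
root = TreeNode(8, TreeNode(3, TreeNode(14), TreeNode(1)), TreeNode(10))

hit = find(root, 1)       # found only after visiting 8, 3, 14, 1
miss = find(root, 99)     # a missing value forces a visit to all 5 nodes
```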

3. Binary Search Tree (BST)

| Operation | Average | Worst |
| --- | --- | --- |
| Search | O(log n) | O(n) |
| Insert | O(log n) | O(n) |
| Delete | O(log n) | O(n) |

BST operations are fast when the tree is balanced, but an unbalanced tree (skewed structure) can degrade performance to O(n).
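The gap between the balanced and skewed cases shows up directly in the tree height. Below is a minimal sketch of an unbalanced BST (no self-balancing); `BSTNode`, `bst_insert`, `bst_contains`, `build`, and `height` are hypothetical helper names.

```python
class BSTNode:
    def __init__(self, value):
        self.value = value
        self.left = None
        self.right = None


def bst_insert(root, value):
    """Insert value and return the root; duplicates are ignored."""
    if root is None:
        return BSTNode(value)
    current = root
    while True:
        if value < current.value:
            if current.left is None:
                current.left = BSTNode(value)
                break
            current = current.left
        elif value > current.value:
            if current.right is None:
                current.right = BSTNode(value)
                break
            current = current.right
        else:
            break
    return root


def bst_contains(root, value):
    current = root
    while current is not None:
        if value == current.value:
            return True
        # Each comparison discards one whole subtree.
        current = current.left if value < current.value else current.right
    return False


def height(root):
    """Number of nodes on the longest root-to-leaf path."""
    if root is None:
        return 0
    return 1 + max(height(root.left), height(root.right))


def build(values):
    root = None
    for value in values:
        root = bst_insert(root, value)
    return root


balanced = build([4, 2, 6, 1, 3, 5, 7])   # height 3 for 7 nodes
skewed = build([1, 2, 3, 4, 5, 6, 7])     # sorted input: height 7, a chain
```

Both trees hold the same seven values, but a search in the skewed one may walk all seven nodes. Self-balancing variants such as AVL or red-black trees exist to prevent this.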

4. Heap Complexity

| Operation | Time Complexity |
| --- | --- |
| Insert | O(log n) |
| Delete (top element) | O(log n) |
| Peek | O(1) |

Heaps maintain a specific order (min-heap or max-heap), so the top element is always available in constant time. Inserting an element or removing the top restores the heap property by moving one element up or down the tree, which takes O(log n).
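These operations can be tried with Python's standard `heapq` module, which implements a min-heap on top of a plain list. The values below are arbitrary sample priorities.

```python
import heapq

heap = []
for priority in [5, 3, 8, 1]:
    heapq.heappush(heap, priority)    # insert: O(log n)

smallest = heap[0]                    # peek: O(1), the minimum sits at index 0
removed = heapq.heappop(heap)         # delete top: O(log n)
next_smallest = heap[0]
```

`heapq` has no max-heap variant in its long-standing public API; a common workaround is to push negated values.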

5. Graph Complexity

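| Operation | Time Complexity |
| --- | --- |
| Add vertex | O(1) |
| Add edge | O(1) |
| Traversal | O(V + E) |

These values assume an adjacency-list representation. Graph traversal depends on the number of vertices (V) and edges (E), since algorithms like BFS and DFS may need to visit every node and connection. With an adjacency matrix, traversal takes O(V²) because every row must be scanned to find a vertex's neighbors.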
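A minimal sketch of these operations on an undirected graph, assuming an adjacency list stored as a dictionary of lists. The helper names `add_vertex`, `add_edge`, and `bfs` are illustrative, and `add_edge` does not check for duplicate edges.

```python
from collections import deque


def add_vertex(graph, vertex):
    graph.setdefault(vertex, [])          # O(1) on average


def add_edge(graph, u, v):
    add_vertex(graph, u)
    add_vertex(graph, v)
    graph[u].append(v)                    # amortized O(1) per direction
    graph[v].append(u)


def bfs(graph, start):
    """Return vertices in breadth-first order from start."""
    visited = {start}
    order = []
    queue = deque([start])
    while queue:
        vertex = queue.popleft()          # each vertex is dequeued once: O(V)
        order.append(vertex)
        for neighbor in graph[vertex]:    # each adjacency entry is read once: O(E)
            if neighbor not in visited:
                visited.add(neighbor)
                queue.append(neighbor)
    return order


graph = {}
add_edge(graph, "A", "B")
add_edge(graph, "A", "C")
add_edge(graph, "B", "D")
add_edge(graph, "C", "D")

order = bfs(graph, "A")
```

Marking a vertex as visited when it is enqueued, rather than when it is dequeued, keeps any vertex from entering the queue twice.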

Quick Comparison Table of All Data Structures

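| Data Structure | Access | Search | Insert | Delete |
| --- | --- | --- | --- | --- |
| Array | O(1) | O(n) | O(n) | O(n) |
| Linked List | O(n) | O(n) | O(1) | O(1) |
| Stack | O(n) | O(n) | O(1) | O(1) |
| Queue | O(n) | O(n) | O(1) | O(1) |
| Hash Table (average) | N/A | O(1) | O(1) | O(1) |
| BST (average) | O(log n) | O(log n) | O(log n) | O(log n) |

The hash table and BST rows show average-case values; both degrade to O(n) in the worst case. Linked list insertion and deletion are O(1) only at the head or at a node that has already been located.

This comparison helps quickly identify which data structure performs best for specific operations and is commonly asked about in DSA interviews.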

Why Time Complexity Comparison Matters

- Choose the correct data structure: Helps select the most efficient structure based on operation needs.

- Improve performance: Faster operations lead to better application responsiveness.

- Optimize large systems: Efficient structures handle large datasets without slowing down.

- Required for interviews: Frequently tested in DSA and coding interviews.

- Helps design scalable software: Ensures applications perform well as user data grows.

How Interviewers Use This Topic

Understanding the time complexity of data structures is a common requirement in technical interviews because companies want candidates who can choose efficient solutions, not just working solutions.

Common Interview Questions

Companies often ask questions such as:

- Which data structure is fastest for search operations?

- Compare Array vs Linked List performance.

- Why is the average time complexity of a HashMap O(1)?

- When should you use a BST instead of a HashMap?

- Which data structure is best for frequent insertions?

Preparation Tips

To prepare well for these questions:

- Practice DSA problems: Solve problems involving arrays, linked lists, trees, and hash tables.

- Study operation complexities: Memorize and understand time complexities of common data structures.

- Solve MCQs: Practice theoretical questions on algorithms and data structures.

- Practice company questions: Focus on interview patterns from companies like TCS, Infosys, Accenture, and Cognizant.

For structured preparation, you can practice DSA questions, MCQs, and company-specific interview problems on PlacementPreparation.io to strengthen your placement readiness.

Final Words

Time complexity plays a key role in determining how efficiently a data structure performs under different operations. Since each data structure has strengths and limitations, understanding its operation-wise performance helps in choosing the right one for a given problem.

This comparison is also important for technical interviews, where candidates are expected to justify their data structure choices based on efficiency. Regular practice of DSA problems, complexity analysis, and interview questions can help build strong problem-solving skills.

FAQs

What is the time complexity of array operations?

Array access takes O(1), while search, insertion, and deletion usually take O(n) because elements may need shifting or full traversal.

Which data structure is the fastest?

No single data structure is fastest for all operations. Hash tables are fast for search, arrays for access, and linked lists for insertion and deletion at a known position.

Why is the average time complexity of a HashMap O(1)?

A HashMap uses hashing to map keys directly to indices, allowing average O(1) search, insert, and delete, though collisions can cause an O(n) worst case.

Which is better, an array or a linked list?

Arrays are better for fast access, while linked lists are better for frequent insertions and deletions. The choice depends on the use case.

Which data structure is best for searching?

Hash tables provide the fastest average search time, O(1), while balanced BSTs provide O(log n) search when sorted data is needed.

Why is BST search O(log n)?

A balanced BST halves the search space at each step, similar to binary search, resulting in O(log n) complexity.

How can I remember the time complexities of data structures?

Practice DSA regularly, revise comparison tables, solve MCQs, and apply data structures in coding problems to remember their time complexities naturally.

Related Posts

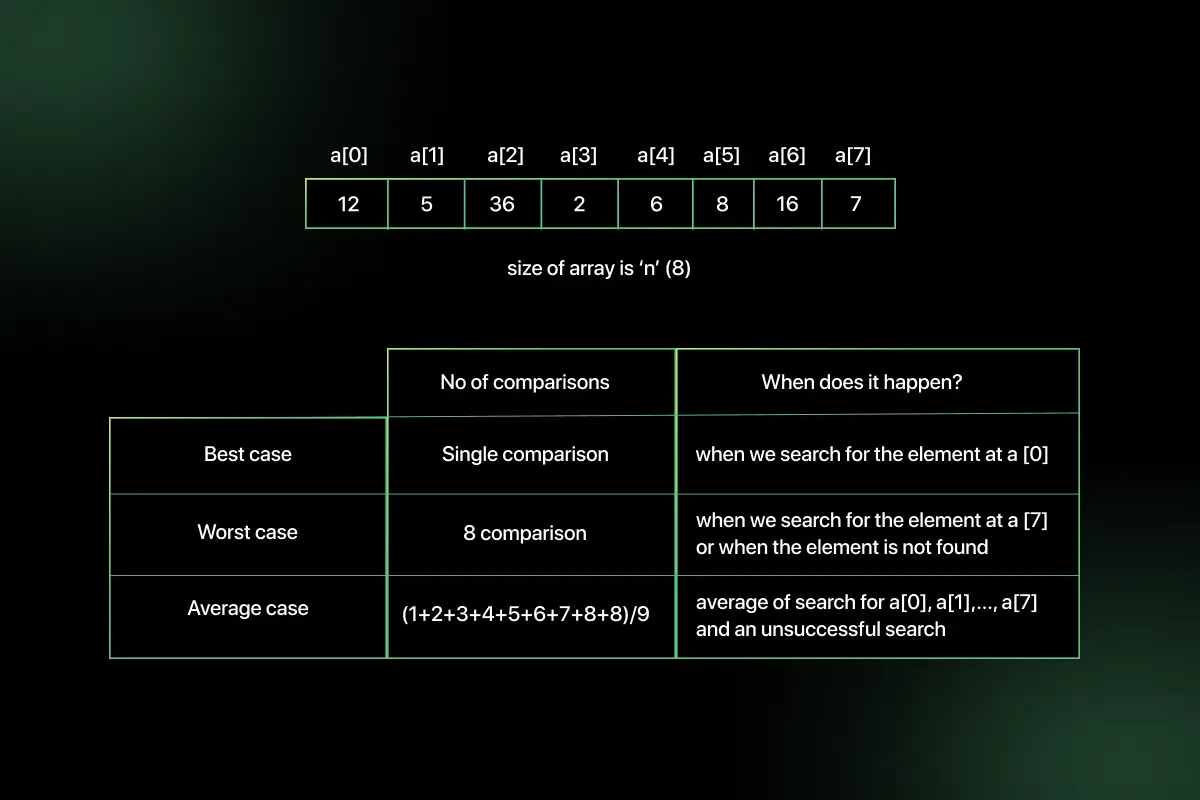

Searching Algorithm in Data Structure

Searching is one of the most fundamental operations in computer science because almost every application involves finding specific data efficiently. …

Warning: Undefined variable $post_id in /var/www/wordpress/wp-content/themes/placementpreparation/template-parts/popup-zenlite.php on line 1050