Big O Notation in Data Structure

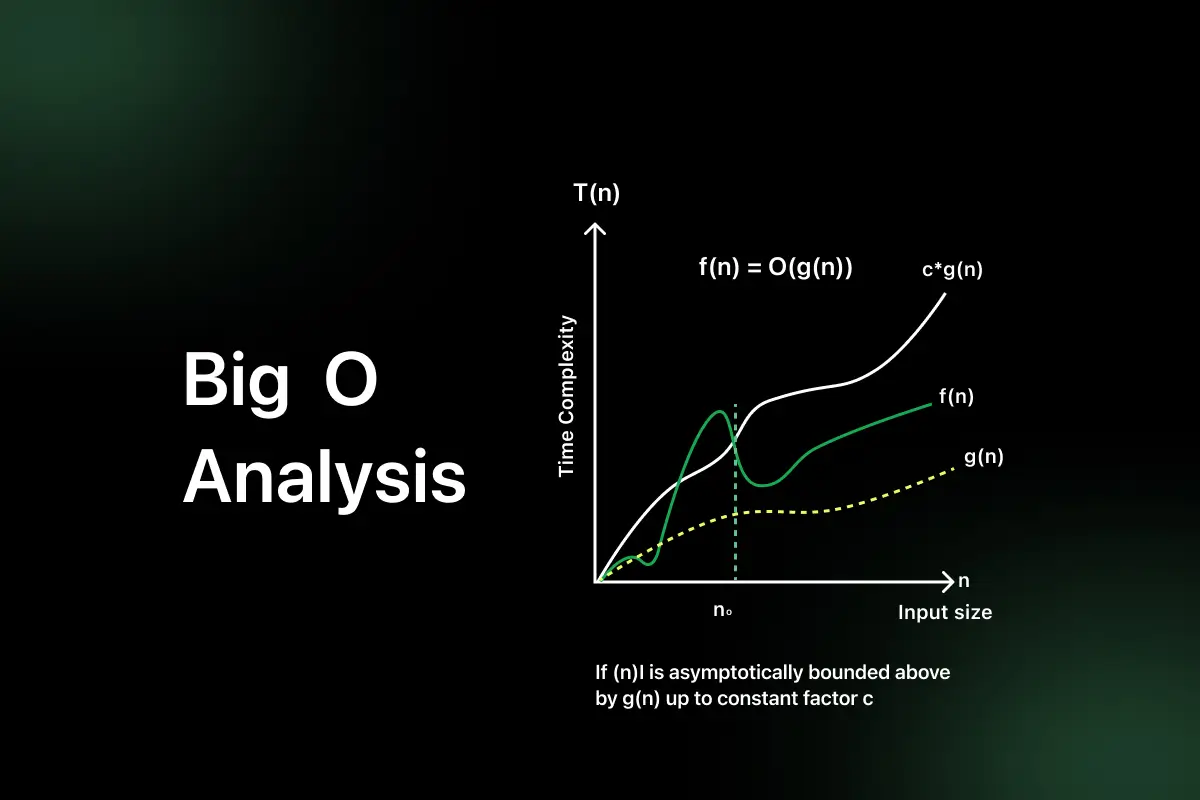

As data grows, the performance of an algorithm becomes critical because inefficient solutions can slow down applications and systems. Big O notation addresses this by describing how an algorithm's running time or memory use scales with increasing input size.

Big O is also a common topic in coding interviews because it shows how well you understand algorithm efficiency and optimization. Developers also use Big O time complexity in system design to choose efficient approaches for handling large amounts of data.

After reading this article, you will understand algorithm complexity, identify basic Big O patterns, compare algorithm performance, and recognize complexity in interview problems.

Why Big O Notation Matters in Real Programming

- Predict performance at scale: Big O helps developers understand how an algorithm performs as data size increases, which is important when applications handle thousands or millions of records.

- Compare different solutions: Developers use Big O to compare multiple approaches and choose the most efficient one instead of relying on guesswork.

- Avoid slow systems: Understanding complexity helps prevent performance bottlenecks that can slow down applications as usage grows.

- Used in database and backend design: Backend systems and databases rely on efficient algorithms to process queries quickly and manage large datasets.

Real example: Searching 10 records may feel instant with any algorithm, but searching 1 million records shows the real difference between O(n) and O(log n) performance.
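To make this gap concrete, here is a minimal sketch that counts comparisons instead of measuring seconds. The function names `linear_search_steps` and `binary_search_steps` are illustrative, and the data is assumed to be a sorted list of integers (binary search only works on sorted input).

```python
def linear_search_steps(items, target):
    """Return the number of comparisons a linear scan makes."""
    steps = 0
    for value in items:
        steps += 1
        if value == target:
            break
    return steps


def binary_search_steps(items, target):
    """Return the number of loop iterations binary search makes on a sorted list."""
    low, high = 0, len(items) - 1
    steps = 0
    while low <= high:
        steps += 1
        mid = (low + high) // 2
        if items[mid] == target:
            break
        if items[mid] < target:
            low = mid + 1   # discard the lower half
        else:
            high = mid - 1  # discard the upper half
    return steps


records = list(range(1_000_000))  # sorted data, assumed for illustration
target = records[-1]              # last element: worst case for the linear scan

linear_steps = linear_search_steps(records, target)
binary_steps = binary_search_steps(records, target)
print(linear_steps, binary_steps)
```

With 1 million records the linear scan makes 1,000,000 comparisons in this case, while binary search needs at most 20 iterations.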

Understanding Growth Rate Instead of Exact Time

The exact time an algorithm takes depends on hardware, programming language, compiler optimizations, and system load. Because of this, measuring performance only in seconds is not reliable.

- Why growth rate matters more than seconds: Big O focuses on how the number of operations increases as input size grows. This gives a better idea of long-term performance rather than short-term execution time.

- Hardware-independent analysis: Big O notation measures algorithm efficiency based on input size, not machine speed. This allows developers to compare algorithms fairly across different systems.

Practical idea: An algorithm taking 1 second for 100 inputs may take much longer for 1 million inputs if its growth rate is poor.
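As a rough worked example of this idea, the sketch below scales a 1-second baseline at 100 inputs up to 1 million inputs under different growth rates. It assumes the running time is exactly proportional to the growth function (no constants or lower-order terms), so the numbers are order-of-magnitude estimates only. The helper `scale_factor` is a hypothetical name.

```python
import math


def scale_factor(growth, n_small, n_large):
    """How many times more work n_large needs than n_small under a growth function."""
    return growth(n_large) / growth(n_small)


growth_rates = {
    "O(log n)": lambda n: math.log2(n),
    "O(n)": lambda n: n,
    "O(n log n)": lambda n: n * math.log2(n),
    "O(n^2)": lambda n: n ** 2,
}

# Assumption for illustration: the algorithm takes 1 second at n = 100.
baseline_seconds = 1.0
estimates = {
    name: baseline_seconds * scale_factor(fn, 100, 1_000_000)
    for name, fn in growth_rates.items()
}

for name, seconds in estimates.items():
    print(f"{name:<11} ~{seconds:,.0f} s")
```

Under these assumptions the same job takes about 3 seconds at O(log n), about 10,000 seconds (under 3 hours) at O(n), and about 100,000,000 seconds (over 3 years) at O(n²).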

Common Big O Complexities You Must Know

Understanding common Big O complexities helps you quickly estimate how an algorithm will perform as the input size increases.

Below are the most important complexity types every DSA learner should know.

| Complexity | Name | Explanation | Real-World Example |
| --- | --- | --- | --- |
| O(1) | Constant | Execution time does not change with input size | Accessing an array element by index |
| O(log n) | Logarithmic | Time increases slowly as input grows | Binary Search |
| O(n) | Linear | Time increases in direct proportion to input size | Linear Search |
| O(n log n) | Linearithmic | Typical cost of efficient comparison-based sorting | Merge Sort, Quick Sort (average case; Quick Sort's worst case is O(n²)) |
| O(n²) | Quadratic | Time increases rapidly, typically due to nested loops | Bubble Sort, Selection Sort |
| O(2ⁿ) | Exponential | Time roughly doubles with each added input | Naive recursive Fibonacci |
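The exponential row is the easiest one to underestimate. As a small sketch, the code below counts how many function calls a naive recursive Fibonacci makes. The `counter` list is just an illustrative way to tally calls and is not part of the algorithm itself.

```python
def fib_naive(n, counter):
    """Naive recursion: every call above the base case spawns two more calls."""
    counter[0] += 1
    if n < 2:
        return n
    return fib_naive(n - 1, counter) + fib_naive(n - 2, counter)


def count_calls(n):
    """Return (fib(n), number of calls made to compute it)."""
    counter = [0]
    value = fib_naive(n, counter)
    return value, counter[0]


for n in (10, 15, 20):
    value, calls = count_calls(n)
    print(f"fib({n}) = {value}, calls = {calls}")
```

Going from n = 10 to n = 20 raises the call count from 177 to 21,891, even though the input only doubled.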

Big O in Common Data Structure Operations

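| Data Structure | Access | Search | Insert | Delete |
| --- | --- | --- | --- | --- |
| Array | O(1) | O(n) | O(n) | O(n) |
| Linked List | O(n) | O(n) | O(1) | O(1) |
| Stack | O(n) | O(n) | O(1) | O(1) |
| Queue | O(n) | O(n) | O(1) | O(1) |
| Hash Table | O(1) average | O(1) average | O(1) average | O(1) average |

Notes: Linked list insert and delete are O(1) only at the head or at a node you already hold a reference to; reaching a given position first costs O(n). Stack and queue insert and delete refer to push/pop and enqueue/dequeue at the ends. Hash table operations are by key, and with many collisions they can degrade to O(n) in the worst case.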
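The table can be mapped onto Python's built-in containers. This is a minimal sketch, assuming CPython's standard `list`, `collections.deque`, and `dict`; the complexities in the comments are the commonly documented ones for those types, and the variable names are illustrative.

```python
from collections import deque

numbers = [10, 20, 30, 40]

# Array (Python list): index access is O(1); a membership test scans, O(n).
third = numbers[2]
found = 40 in numbers

# Stack (list used as a stack): push and pop at the end are O(1) (append is amortized).
stack = []
stack.append("a")
stack.append("b")
top = stack.pop()

# Queue (collections.deque): enqueue and dequeue at the ends are O(1).
queue = deque()
queue.append("first")
queue.append("second")
front = queue.popleft()

# Hash table (dict): lookup, insert, and delete by key are O(1) on average.
ages = {"ana": 31}
ages["ben"] = 27
del ages["ana"]
has_ben = "ben" in ages

print(third, found, top, front, has_ben)
```

Using a plain list as a queue (`list.pop(0)`) would make each dequeue O(n), which is why `deque` is the usual choice here.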

How to Calculate Big O Complexity (Simple Method)

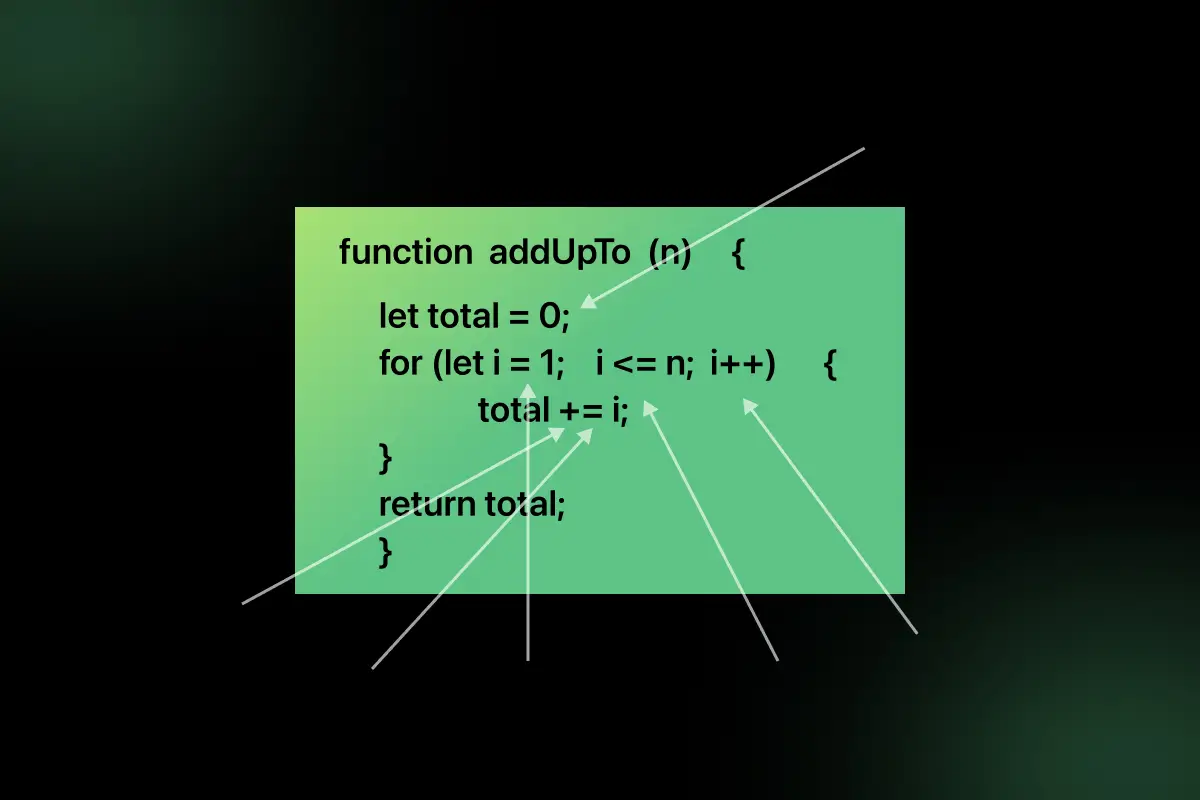

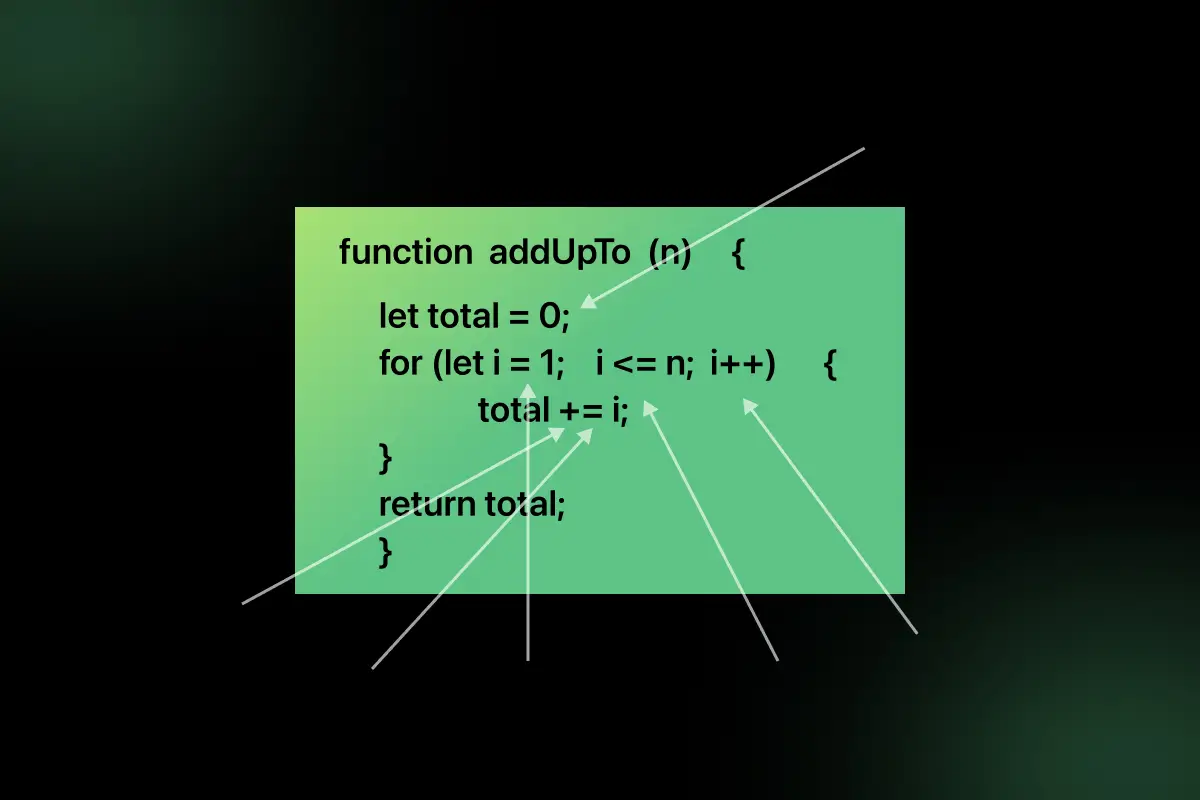

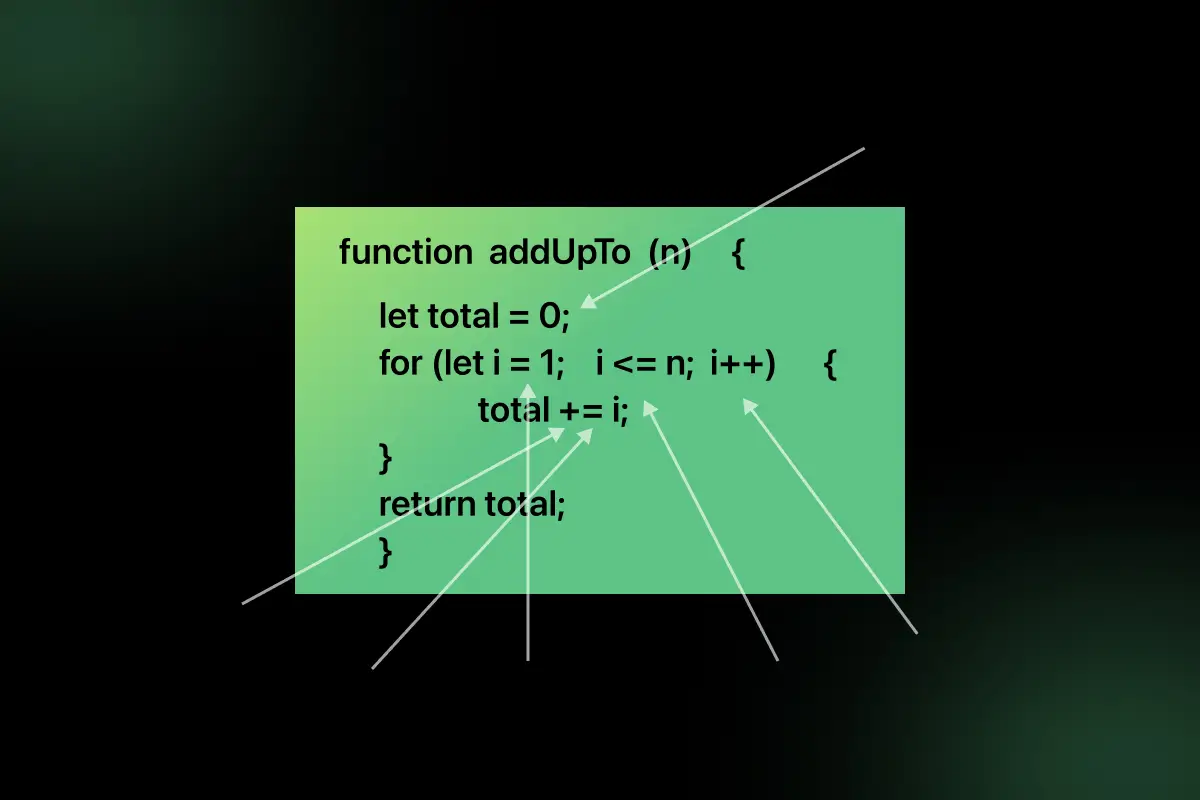

You can calculate Big O complexity by following a few simple rules instead of complex mathematical analysis.

1. Ignore constants: Constant factors do not affect the growth rate.

Example: If an algorithm takes 5n operations, it is considered O(n).

2. Focus on the dominant term: When multiple terms exist, only the fastest-growing term matters.

Example: O(n² + n) becomes O(n²).

3. Count loops: A single loop usually gives O(n) complexity.

Example:

    for i in range(n):
        print(i)

Complexity = O(n)

4. Check nested loops: Nested loops multiply complexity.

Example:

    for i in range(n):
        for j in range(n):
            print(i, j)

Complexity = O(n²)

Simple rule:

- Single loop → O(n)

- Nested loop → O(n²)

- Repeatedly halving the problem → O(log n)
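The three shortcuts can be checked by counting loop iterations directly. The sketch below is illustrative only: the function names are hypothetical, and each function returns the number of basic steps it performed for a given n.

```python
def single_loop_ops(n):
    """One pass over n items: n steps, O(n)."""
    ops = 0
    for _ in range(n):
        ops += 1
    return ops


def nested_loop_ops(n):
    """A loop of n inside a loop of n: n * n steps, O(n^2)."""
    ops = 0
    for _ in range(n):
        for _ in range(n):
            ops += 1
    return ops


def halving_ops(n):
    """Halve n until it reaches 1: about log2(n) steps, O(log n)."""
    ops = 0
    while n > 1:
        n //= 2
        ops += 1
    return ops


for n in (8, 64, 1024):
    print(n, single_loop_ops(n), nested_loop_ops(n), halving_ops(n))
```

For n = 1024 the counts are 1,024 for the single loop, 1,048,576 for the nested loops, and 10 for the halving loop.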

Why Engineers Focus on Worst Case Complexity

- Performance guarantee: Worst-case complexity shows the maximum time an algorithm can take, helping engineers ensure the system will perform within acceptable limits even in difficult scenarios.

- Scalability safety: Systems often deal with unpredictable data sizes. Worst-case analysis helps ensure the application will still perform reliably when data grows significantly.

- System reliability: Engineers design systems based on worst-case behavior to avoid unexpected slowdowns that could impact user experience or system stability.

- Interview expectations: In technical interviews, candidates are often expected to discuss worst-case complexity because it reflects the true scalability and robustness of an algorithm.
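To see how far the worst case can sit from the best case, here is a small sketch using insertion sort, chosen only as an illustration (the article does not single out this algorithm). It counts element comparisons on an already sorted input and on a reverse-sorted input of the same size.

```python
def insertion_sort_comparisons(values):
    """Sort a copy of values and return how many element comparisons were made."""
    items = list(values)
    comparisons = 0
    for i in range(1, len(items)):
        current = items[i]
        j = i - 1
        while j >= 0:
            comparisons += 1
            if items[j] <= current:
                break                # current is already in the right place
            items[j + 1] = items[j]  # shift the larger element one slot right
            j -= 1
        items[j + 1] = current
    return comparisons


n = 1000
best_case = insertion_sort_comparisons(range(n))          # already sorted
worst_case = insertion_sort_comparisons(range(n, 0, -1))  # reverse sorted
print(best_case, worst_case)
```

For 1,000 items the sorted input needs 999 comparisons, while the reverse-sorted input needs 499,500. A system sized around the best case would be badly surprised by the second input.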

Common Mistakes When Learning Big O

- Confusing time with number of steps: Big O does not measure actual execution time in seconds. It measures how the number of operations grows with input size.

- Ignoring the dominant term: Beginners often include all terms in complexity. For example, O(n² + n) should be simplified to O(n²).

- Thinking Big O shows exact time: Big O only shows growth rate, not exact runtime. Two O(n) algorithms can still have different execution speeds.

- Ignoring nested loops: Many learners forget that nested loops multiply complexity. Two nested loops that each run n times result in O(n²), not O(n).
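The dominant-term simplification can be checked numerically. This sketch assumes a hypothetical algorithm that performs exactly n² + n steps and prints how small the linear term's share becomes as n grows.

```python
def total_ops(n):
    """Step count of a hypothetical algorithm costing n^2 + n steps."""
    return n * n + n


for n in (10, 1_000, 100_000):
    linear_share = n / total_ops(n)  # fraction of the work due to the "+ n" term
    print(f"n = {n:>7,}: total = {total_ops(n):>14,}, linear term share = {linear_share:.4%}")
```

The share of the linear term works out to 1 / (n + 1), so it shrinks toward zero as n grows, which is why O(n² + n) is written as O(n²).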

Interview Questions Based on Big O

- What is Big O?

- What is the difference between O(n) and O(log n)?

- How do you calculate complexity?

- Why does the worst case matter?

How Learning Big O Improves Problem Solving

- Helps choose better algorithms: Understanding Big O allows you to compare multiple approaches and select the most efficient algorithm instead of choosing solutions randomly.

- Improves optimization thinking: Learning complexity analysis trains you to think about reducing unnecessary operations and improving performance, which is a key skill in software development.

- Helps crack coding interviews: Many interview problems require candidates to analyze and improve algorithm efficiency. Knowing Big O helps you justify why your solution is optimal.

- Builds system thinking: Big O helps developers think about scalability and performance, which is important when designing systems that handle large amounts of data.

Final Words

Big O notation helps measure how efficiently an algorithm performs as data grows. Understanding complexity improves your ability to choose better coding solutions and optimize performance. Regular practice in analyzing algorithms will strengthen your DSA and interview preparation.

FAQs

Big O notation measures how the time or space required by an algorithm grows as the input size increases.

Big O helps compare algorithms, predict performance, and choose efficient solutions for large datasets and interviews.

O(n) grows linearly with input size, while O(log n) grows much more slowly because the remaining problem shrinks by a constant factor (typically half) at each step.

Big O is calculated by counting loops, ignoring constant factors, and keeping only the fastest-growing term.

Big O describes an upper bound on growth. It is most often quoted for the worst case, but the same notation can also be applied to best-case or average-case behavior.

