Kubernetes Architecture Explained

Modern applications are increasingly built using containers to ensure consistency across development, testing, and production environments. Containers make applications lightweight, portable, and easier to deploy across different systems.

However, managing hundreds or thousands of containers manually becomes complex as applications scale. Automation is required for handling deployment, scaling, networking, and failure recovery.

Kubernetes architecture provides container orchestration by automating deployment, scaling, load balancing, and self-healing of containerized applications.

In this guide, you will learn the components of Kubernetes, how a deployment request flows through the cluster, and real-world use cases in modern cloud-native systems.

What is Kubernetes Architecture?

Kubernetes architecture is a cluster-based container orchestration system that automates the deployment, scaling, and management of containerized applications.

- Container Orchestration Platform: Kubernetes acts as an orchestration platform that manages multiple containers across different machines, ensuring they run reliably and efficiently.

- Cluster-Based System: It operates as a cluster of nodes working together. These nodes are grouped to provide high availability, scalability, and fault tolerance.

- Manages Deployment, Scaling, and Networking: Kubernetes automatically deploys containers, scales them based on demand, manages internal networking, and ensures service availability.

- Built Around Control Plane and Worker Nodes: The architecture is divided into a control plane that manages the cluster and worker nodes that run application containers.

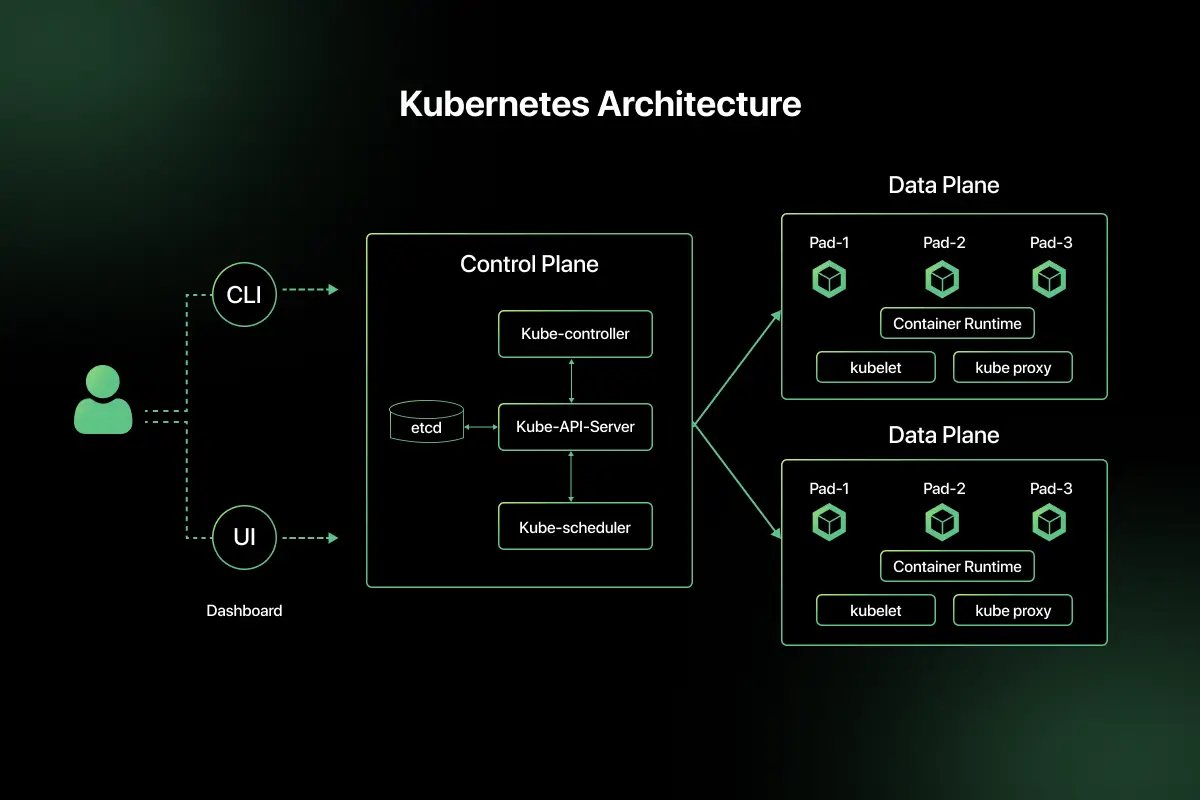

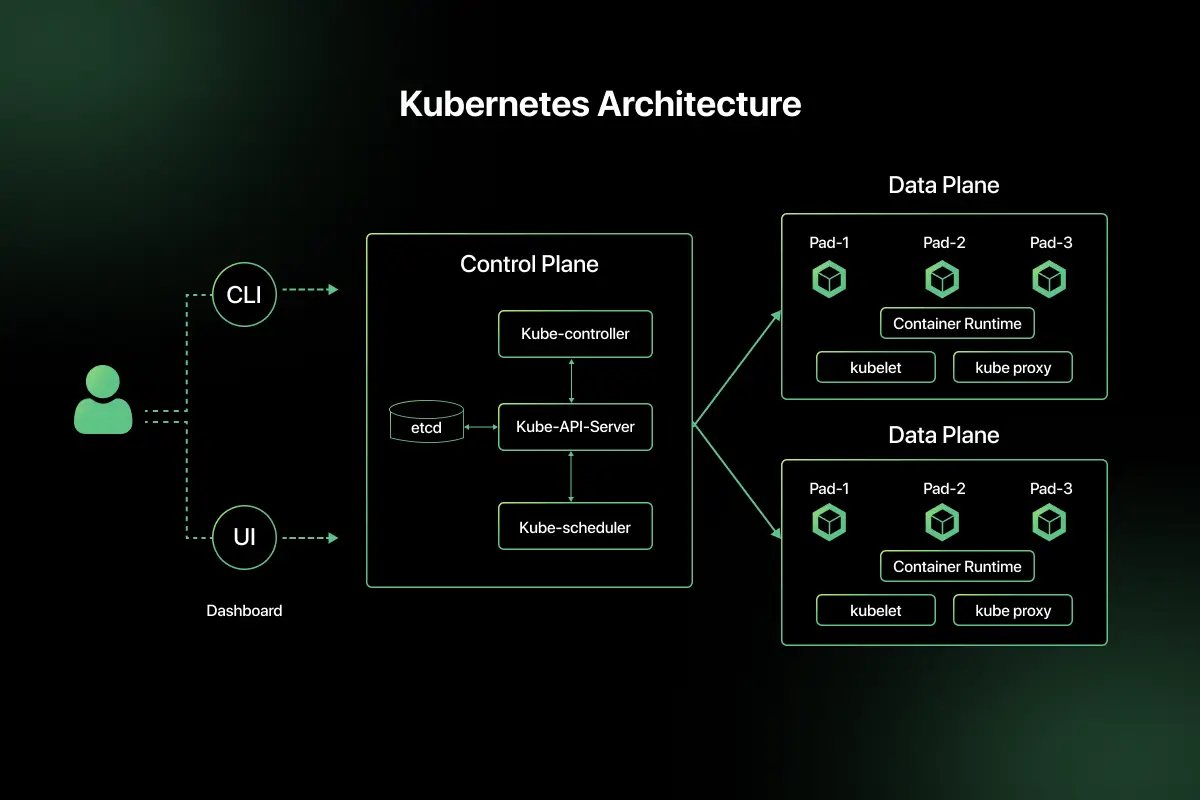

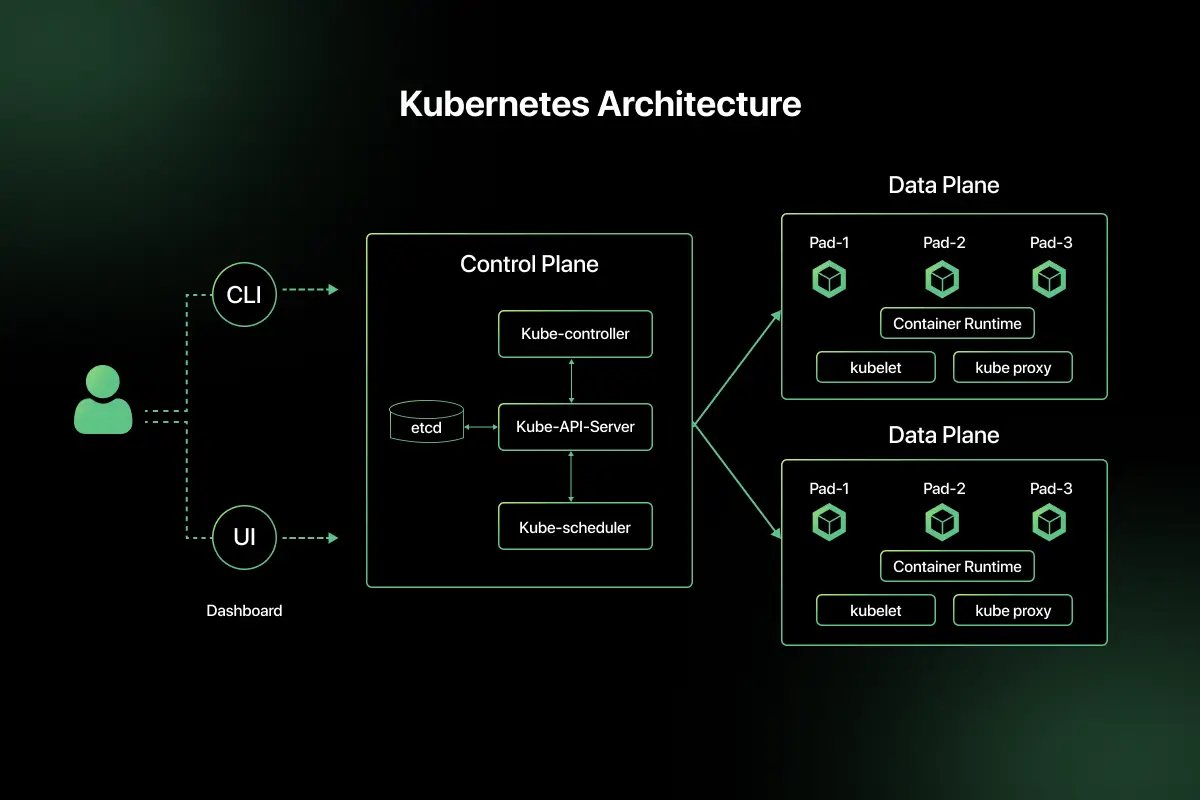

Overall Structure of Kubernetes Architecture

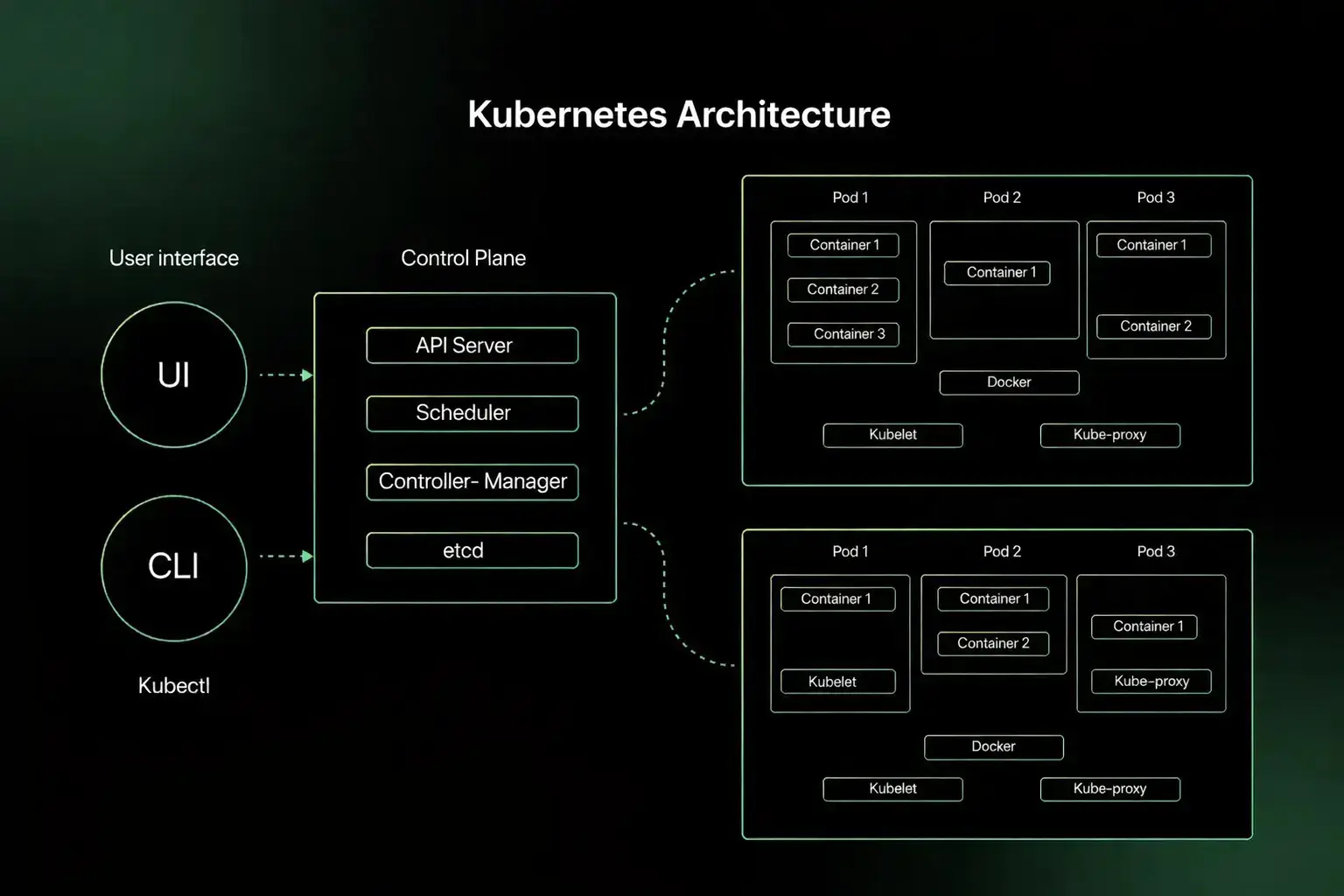

Kubernetes architecture is divided into two main parts that work together to manage containerized applications within a cluster.

- Control Plane: The control plane is the management layer of Kubernetes. It makes global decisions about the cluster, such as scheduling containers, monitoring node health, and maintaining the desired system state. It ensures that the cluster operates according to defined configurations.

- Worker Nodes: Worker nodes are the machines where application containers actually run. Each worker node hosts pods and executes workloads assigned by the control plane. They handle container runtime, networking, and communication within the cluster.

Components of the Kubernetes Control Plane

The control plane manages the overall state of the Kubernetes cluster. It ensures that applications run as expected and resources are allocated properly.

- API Server: The API Server is the central communication hub of Kubernetes. It receives and processes requests from users, administrators, and internal components, and exposes the Kubernetes API.

- etcd: etcd is a distributed key-value store that stores the entire cluster configuration and state. It acts as the primary data storage for Kubernetes.

- Controller Manager: The Controller Manager runs background controllers that monitor the cluster state. If the actual state differs from the desired state, it takes corrective actions automatically.

- Scheduler: The Scheduler assigns newly created pods to appropriate worker nodes. It considers resource availability, constraints, and policies before making placement decisions.
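
The desired-state idea behind the Controller Manager can be sketched in a few lines of Python. This is a toy model, not Kubernetes code: `ClusterState`, `reconcile`, and the `web-N` pod names are hypothetical, and a real controller watches the API Server rather than holding state in memory.

```python
from dataclasses import dataclass, field


@dataclass
class ClusterState:
    """Toy stand-in for the state a controller observes (not a real Kubernetes type)."""
    desired_replicas: int
    running_pods: list[str] = field(default_factory=list)
    next_id: int = 0


def reconcile(state: ClusterState) -> list[str]:
    """Run one reconciliation pass and return the corrective actions taken."""
    actions = []
    # Too few pods: create replacements until the count matches the desired state.
    while len(state.running_pods) < state.desired_replicas:
        name = f"web-{state.next_id}"
        state.next_id += 1
        state.running_pods.append(name)
        actions.append(f"create {name}")
    # Too many pods: remove the surplus.
    while len(state.running_pods) > state.desired_replicas:
        name = state.running_pods.pop()
        actions.append(f"delete {name}")
    return actions


state = ClusterState(desired_replicas=3)
print(reconcile(state))              # first pass creates three pods
state.running_pods.remove("web-1")   # simulate a pod failure
print(reconcile(state))              # next pass creates one replacement
print(reconcile(state))              # nothing to do once the states match
```

The point of the sketch is that the controller never receives a "restart this pod" command. It only compares the desired and actual state and acts on the difference, which is why the same loop handles failures, scale-ups, and scale-downs.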

Components of Worker Nodes

Worker nodes are responsible for running application workloads in a Kubernetes cluster. Each node contains components that manage containers and networking.

- Kubelet: Kubelet is an agent that runs on every worker node. It communicates with the control plane and ensures that containers in assigned pods are running correctly.

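- Kube Proxy: Kube Proxy maintains networking rules on the node that implement Services. Traffic sent to a Service's virtual IP is forwarded to one of the pods behind it, which provides internal load balancing. Pod-to-pod connectivity itself is provided by the cluster's network plugin.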

- Container Runtime: The container runtime is the software responsible for running containers. It pulls container images and executes them inside pods. Examples include containerd and other runtime engines.

- Pods: A pod is the smallest deployable unit in Kubernetes. It contains one or more containers that share the same network and storage resources within the node.

Kubernetes Architecture Diagram and Working Flow

In this section, learn how the Kubernetes architecture works when deploying a containerized application in a cluster.

Scenario: A developer deploys a containerized web application to a Kubernetes cluster.

- User submits deployment request: The user applies a deployment configuration file using a command such as kubectl apply. The request is sent to the Kubernetes API Server.

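- API Server validates: The API Server validates the request, checks permissions, and stores the desired state of the application in etcd.

- Controllers create pods: Controllers in the Controller Manager notice the new Deployment and create a ReplicaSet, which in turn creates the required pod objects. At this point the pods exist in the API but are not yet assigned to any node.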

- Scheduler assigns node: The Scheduler evaluates available worker nodes based on resource capacity and policies. It assigns the pod to the most suitable node.

- Kubelet creates Pod: The Kubelet on the selected worker node sees the pod assigned to it and asks the container runtime to create it. The runtime pulls the required container image if it is not already available on the node.

- Container runs: The container runtime starts the container inside the pod. The application becomes accessible within the cluster or externally through a service.
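
The scheduling step in the flow above can be sketched as a filter phase followed by a scoring phase. This is a simplified illustration, not the real scheduler: the `Node` and `PodRequest` types, the node names, and the "most free CPU left" scoring rule are all assumptions made for the example, and the actual scheduler weighs many more factors.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Node:
    name: str
    free_cpu_millicores: int
    free_memory_mib: int


@dataclass
class PodRequest:
    name: str
    cpu_millicores: int
    memory_mib: int


def schedule(pod: PodRequest, nodes: list[Node]) -> Optional[str]:
    """Pick a node for the pod, or return None if no node fits."""
    # Filter: keep only nodes with enough free CPU and memory.
    feasible = [
        n for n in nodes
        if n.free_cpu_millicores >= pod.cpu_millicores
        and n.free_memory_mib >= pod.memory_mib
    ]
    if not feasible:
        return None  # in a real cluster the pod would stay Pending
    # Score: prefer the node with the most CPU left after placement (assumed policy).
    best = max(feasible, key=lambda n: n.free_cpu_millicores - pod.cpu_millicores)
    # Bind: reserve the resources on the chosen node.
    best.free_cpu_millicores -= pod.cpu_millicores
    best.free_memory_mib -= pod.memory_mib
    return best.name


nodes = [
    Node("node-a", free_cpu_millicores=500, free_memory_mib=1024),
    Node("node-b", free_cpu_millicores=2000, free_memory_mib=4096),
    Node("node-c", free_cpu_millicores=4000, free_memory_mib=256),
]
print(schedule(PodRequest("web", cpu_millicores=1000, memory_mib=512), nodes))
```

Here `node-a` is filtered out for lack of CPU and `node-c` for lack of memory, so the pod lands on `node-b`. Separating filtering from scoring keeps hard constraints (the pod must fit) apart from preferences (which fitting node is best).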

Kubernetes Objects in Architecture

Kubernetes architecture uses objects to define, manage, and control application workloads within a cluster. Each object plays a specific role in maintaining the desired system state.

- Pod: A Pod is the smallest deployable unit in Kubernetes. It contains one or more containers that share the same network and storage within a worker node.

- Deployment: A Deployment defines how pods should be created and updated. It manages rolling updates and ensures the desired number of application instances are running.

- Service: A Service provides a stable network endpoint to access a group of pods. It enables communication between components inside or outside the cluster.

- ReplicaSet: A ReplicaSet ensures a specified number of identical pod replicas are running at all times. It maintains application availability.

- Namespace: A Namespace is used to logically separate resources within a cluster. It helps organize and manage applications in large environments.
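
To make the Deployment object above concrete, the sketch below builds a minimal manifest as a Python dictionary and prints it as JSON. The field names follow the `apps/v1` Deployment API. The helper `make_deployment`, the application name `web`, the image `nginx:1.27`, and port 80 are placeholders chosen for illustration.

```python
import json


def make_deployment(name: str, image: str, replicas: int, namespace: str = "default") -> dict:
    """Build a minimal Deployment manifest as a plain dictionary."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            # Desired number of pod replicas; a ReplicaSet keeps this many running.
            "replicas": replicas,
            # The selector must match the labels in the pod template below.
            "selector": {"matchLabels": dict(labels)},
            "template": {
                "metadata": {"labels": dict(labels)},
                "spec": {
                    "containers": [
                        {"name": name, "image": image, "ports": [{"containerPort": 80}]}
                    ]
                },
            },
        },
    }


manifest = make_deployment("web", "nginx:1.27", replicas=3)
print(json.dumps(manifest, indent=2))
```

Manifests are more commonly written in YAML, but `kubectl apply -f` also accepts JSON, so the printed output could be saved to a file and applied as-is. Note how the objects relate: the Deployment carries a replica count (enforced by a ReplicaSet), a pod template (the Pod), and a namespace.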

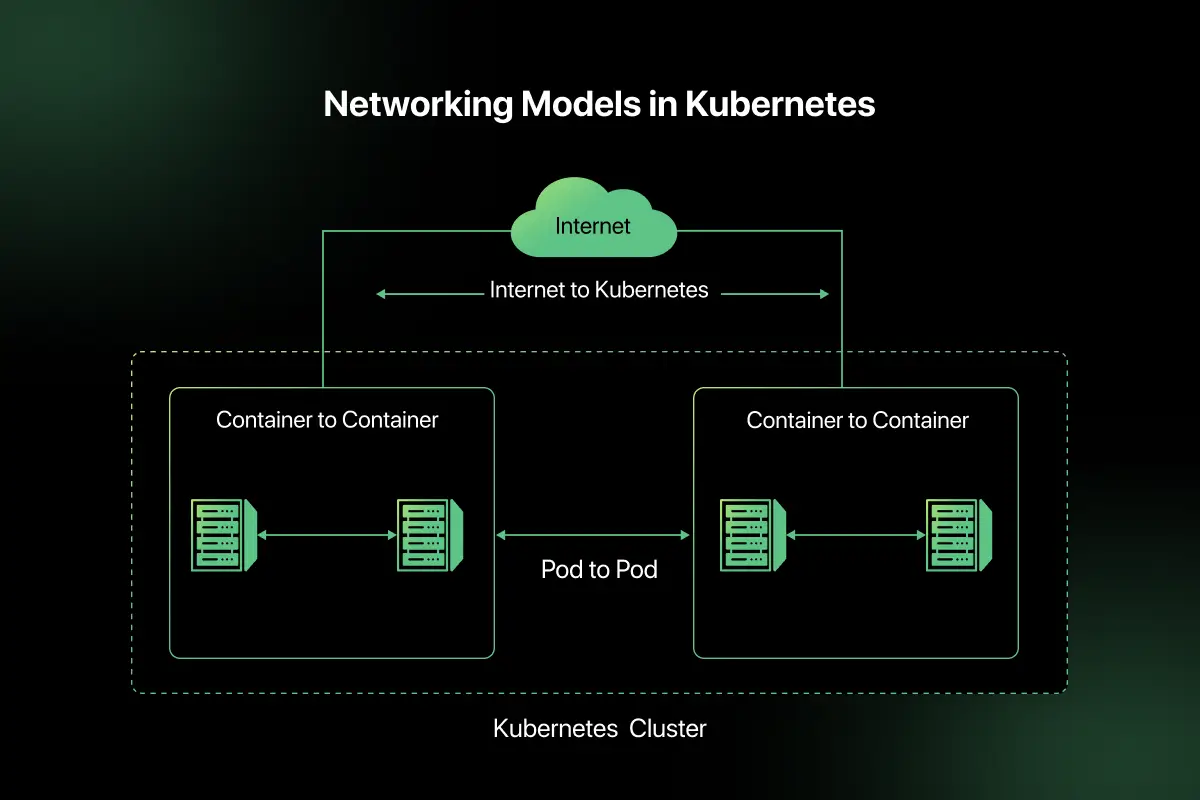

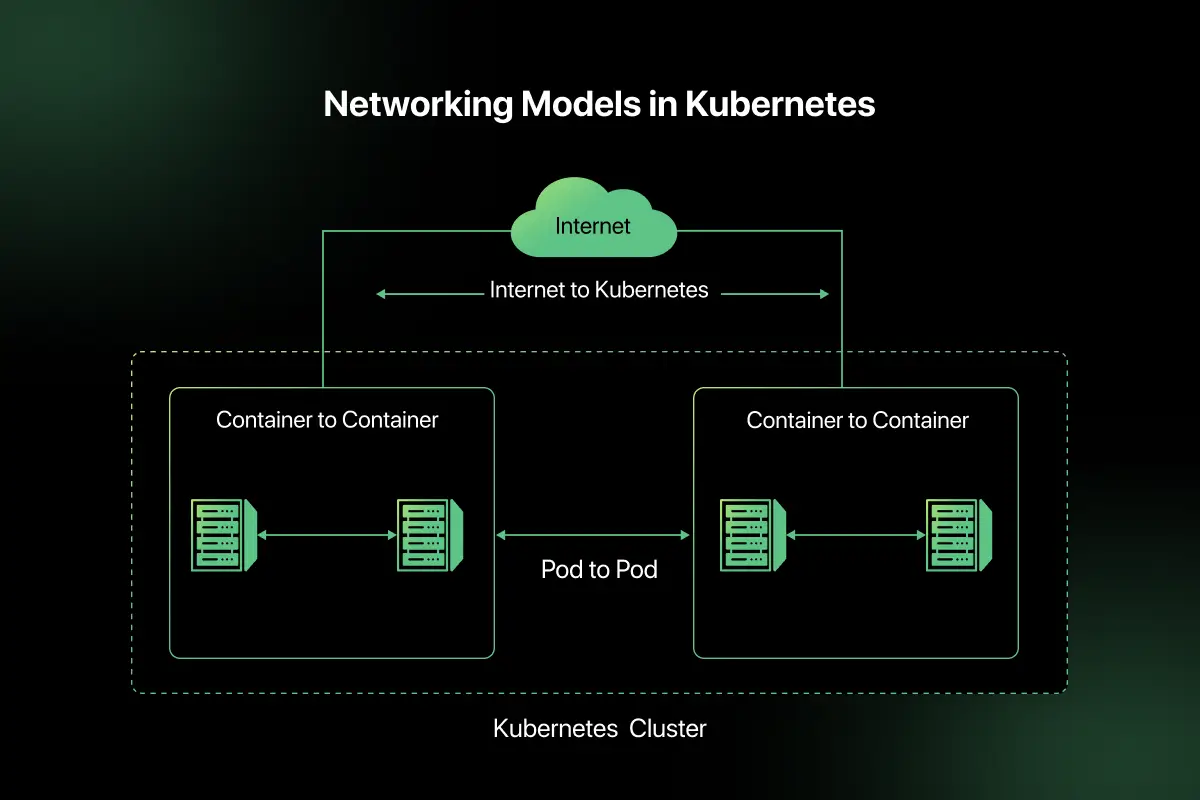

Kubernetes Networking Model

Kubernetes defines a networking model, implemented by the cluster's network plugin, that enables seamless communication between pods and services within a cluster.

Pod to Pod Communication: In Kubernetes, every pod gets its own IP address. Pods can communicate directly with each other across nodes without network address translation. This simplifies internal communication within the cluster.

Service Abstraction: A Service acts as a stable abstraction layer over a group of pods. Since pod IP addresses can change, the Service provides a consistent endpoint for communication.

ClusterIP: ClusterIP is the default service type in Kubernetes. It exposes the Service on a virtual IP address that is reachable only from inside the cluster, so pods can reach it but external clients cannot.

Load Balancing: Kubernetes automatically distributes traffic across multiple pod replicas using Services. This ensures high availability and balanced workload distribution across the cluster.
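
The Service abstraction and its load balancing can be illustrated with a small model. This is a sketch under stated assumptions: the `Pod` and `Service` classes and the IP addresses are hypothetical, and round-robin is used only because it is easy to follow. Real data-plane implementations choose backends differently.

```python
from dataclasses import dataclass
from itertools import cycle


@dataclass
class Pod:
    name: str
    ip: str
    labels: dict[str, str]


class Service:
    """Toy Service: a stable name in front of whichever pods match a label selector."""

    def __init__(self, name: str, selector: dict[str, str]):
        self.name = name
        self.selector = selector
        self._backends = cycle([])

    def update_endpoints(self, pods: list[Pod]) -> list[str]:
        """Recompute backend IPs, as happens when pods are added or replaced."""
        ips = [
            p.ip for p in pods
            if all(p.labels.get(k) == v for k, v in self.selector.items())
        ]
        self._backends = cycle(ips)
        return ips

    def route(self) -> str:
        """Return the pod IP that receives the next request (round-robin)."""
        return next(self._backends)


pods = [
    Pod("web-0", "10.244.1.5", {"app": "web"}),
    Pod("web-1", "10.244.2.7", {"app": "web"}),
    Pod("db-0", "10.244.1.9", {"app": "db"}),
]
svc = Service("web", selector={"app": "web"})
svc.update_endpoints(pods)
print([svc.route() for _ in range(4)])  # alternates between the two web pods

# A replaced pod comes back with a new IP; callers keep using the same Service.
pods[0] = Pod("web-2", "10.244.3.4", {"app": "web"})
print(svc.update_endpoints(pods))
```

Clients only ever address the Service, so pod IPs can change freely behind it. The label selector, not a fixed list of addresses, decides which pods receive traffic.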

Kubernetes vs Docker Swarm

Understanding the difference between Kubernetes and Docker Swarm helps in choosing the right container orchestration platform based on scale and operational needs.

| Factor | Kubernetes | Docker Swarm |
| --- | --- | --- |
| Scalability | Designed for large-scale clusters with advanced auto scaling features | Suitable for small to medium clusters with simpler scaling |
| Deployment | Uses declarative configuration with YAML files and advanced rollout strategies | Simpler deployment using Docker CLI commands |
| Networking | Advanced networking model with Services, Cluster IP, and load balancing | Simpler built-in networking with overlay networks |
| Community Support | Large open source community and strong enterprise adoption | Smaller community compared to Kubernetes |
| Complexity | More complex to set up and manage | Easier to learn and configure initially |
| Use Case | Large scale microservices and cloud native applications | Small projects and simpler container orchestration needs |

Advantages and Challenges of Kubernetes Architecture

Kubernetes architecture provides powerful automation and scalability features, but it also introduces operational complexity that requires proper expertise.

Advantages

- Automatic scaling – Kubernetes can automatically scale pods up or down based on workload demand using autoscaling mechanisms.

- Self-healing – If a pod or container fails, Kubernetes automatically restarts or replaces it to maintain the desired state.

- Rolling updates – Applications can be updated gradually without downtime, ensuring smooth deployment of new versions.

- Load balancing – Traffic is automatically distributed across multiple pod replicas to ensure high availability.

- Efficient resource utilization – The scheduler places pods according to their declared CPU and memory requests, so workloads are packed onto nodes in a way that uses cluster capacity efficiently.
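
The automatic scaling advantage can be made concrete with the replica calculation described in the Kubernetes Horizontal Pod Autoscaler documentation: the desired replica count is the current count multiplied by the ratio of the observed metric to its target, rounded up. The sketch below is a simplified version. The function name and parameters are illustrative, and it leaves out details such as minimum and maximum replica limits and pods that are not yet ready.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float,
                     target_metric: float, tolerance: float = 0.1) -> int:
    """Return the replica count suggested by the autoscaling ratio."""
    ratio = current_metric / target_metric
    # Skip scaling when the ratio is close enough to 1.0 to avoid flapping.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    # Round up so the target is met rather than slightly exceeded.
    return math.ceil(current_replicas * ratio)


# 4 pods averaging 90% CPU against a 60% target: scale up.
print(desired_replicas(4, current_metric=90, target_metric=60))
# 4 pods averaging 30% CPU against a 60% target: scale down.
print(desired_replicas(4, current_metric=30, target_metric=60))
# 63% against a 60% target is within tolerance: no change.
print(desired_replicas(3, current_metric=63, target_metric=60))
```

The tolerance band is what keeps the replica count stable when the metric hovers near its target. The default of 0.1 used here matches the documented default, but it is configurable at the cluster level.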

Challenges and Limitations

- Learning curve – Understanding Kubernetes concepts such as pods, deployments, networking, and controllers requires time and practice.

- Operational complexity – Managing clusters, monitoring performance, and handling upgrades can be complex in large-scale systems.

- Resource overhead – Running Kubernetes itself consumes system resources, especially in small environments.

- Debugging difficulty – Troubleshooting distributed containerized applications across multiple nodes can be challenging.

Real World Use Cases

Kubernetes architecture is widely adopted in modern distributed systems where scalability and automation are essential.

Cloud native applications: Kubernetes manages containerized applications designed for cloud environments. It ensures scalability, resilience, and efficient resource allocation across clusters.

Microservices deployment: Each microservice runs in separate pods, allowing independent scaling, updates, and fault isolation within large distributed systems.

CI/CD pipelines: Kubernetes automates application deployment during continuous integration and delivery workflows, ensuring smooth rollout and rollback of new versions.

Large-scale SaaS platforms: Software as a Service platforms use Kubernetes to handle high user traffic, maintain availability, and scale infrastructure dynamically based on demand.

Important Concepts to Remember

- Control plane vs worker node

- Pod vs container

- ReplicaSet vs Deployment

- Horizontal Pod Autoscaling

- Rolling updates
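
Two of the concepts above, ReplicaSet vs Deployment and rolling updates, can be seen together in a small simulation: during an update, a Deployment scales a new ReplicaSet up while scaling the old one down. This is a toy model, assuming new pods become ready instantly. The `max_surge` and `max_unavailable` parameters mirror the Deployment strategy settings of the same names, but the default values here are chosen for the example.

```python
def rolling_update(replicas: int, max_surge: int = 1,
                   max_unavailable: int = 0) -> list[tuple[int, int]]:
    """Return (old_pods, new_pods) counts after each step of a simulated rollout."""
    if max_surge == 0 and max_unavailable == 0:
        # With no room to add or remove pods, the rollout could never progress.
        raise ValueError("max_surge and max_unavailable cannot both be 0")
    old, new = replicas, 0
    steps = [(old, new)]
    while old > 0 or new < replicas:
        # Scale up the new ReplicaSet without exceeding replicas + max_surge in total.
        surge_room = replicas + max_surge - (old + new)
        new += min(surge_room, replicas - new)
        # Scale down the old ReplicaSet while keeping enough pods available.
        removable = (old + new) - (replicas - max_unavailable)
        old -= min(old, max(removable, 0))
        steps.append((old, new))
    return steps


for old_count, new_count in rolling_update(replicas=3):
    print(f"old ReplicaSet: {old_count}  new ReplicaSet: {new_count}")
```

With the settings shown, the total never drops below three pods, which is the property that lets an update proceed without taking the application offline.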

Final Words

Kubernetes architecture automates container orchestration using a control plane and worker node model. It enables scalable, resilient, and cloud native application deployment. Proper configuration ensures efficient resource management.

FAQs

What is Kubernetes architecture?

Kubernetes architecture is a cluster-based container orchestration system that automates deployment, scaling, networking, and management of containerized applications across multiple nodes.

What are the main components of the Kubernetes control plane?

Control plane components include the API Server, etcd, Controller Manager, and Scheduler, which manage cluster state and coordinate workloads.

What is a Pod in Kubernetes?

A Pod is the smallest deployable unit in Kubernetes that contains one or more containers sharing network and storage resources.

How does Kubernetes ensure high availability?

Kubernetes ensures high availability through pod replication, self-healing, automatic restarts, and load balancing across worker nodes.

What is etcd used for in Kubernetes?

etcd is a distributed key-value store that maintains cluster configuration, state information, and desired system settings.

What is the difference between Docker and Kubernetes?

Docker is a platform for building and running containers, while Kubernetes is an orchestration system that manages and scales containers across clusters.
